ChatGPT vs Google Gemini vs Claude AI: Real-World Task Performance Battle (Tested on 50 Tasks)

Table of Contents

- Introduction

- The Three Giants of AI Assistants

- Testing Methodology: 50 Real-World Tasks

- Coding and Programming Performance

- Creative Writing and Content Generation

- Data Analysis and Research Tasks

- Multimodal Capabilities: Vision and Beyond

- Conversational Intelligence and Context Understanding

- Speed, Reliability, and User Experience

- The Verdict: Which AI Wins?

- FAQ

Introduction

Marcus Chen sat in his home office on a Tuesday morning in January 2026, facing three browser tabs simultaneously displaying ChatGPT, Google Gemini, and Claude AI. As a freelance software developer and content creator, he had spent the past six months switching between these AI assistants, never quite certain which one truly deserved his loyalty and monthly subscription fee. Each platform promised revolutionary capabilities, touted impressive benchmark scores, and claimed superiority over competitors. Yet Marcus found himself increasingly frustrated by the disconnect between marketing promises and actual performance on the tasks that mattered to his daily work. The breaking point came when a critical project deadline loomed and each AI assistant provided dramatically different solutions to the same coding problem, forcing him to spend hours verifying which approach actually worked correctly.


The artificial intelligence landscape has transformed dramatically since early 2025, with OpenAI’s ChatGPT, Google’s Gemini, and Anthropic’s Claude emerging as the dominant consumer-facing AI assistants. Each company has invested billions of dollars in model development, infrastructure, and competitive positioning, creating an intense three-way race that benefits users through rapid innovation and capability improvements. ChatGPT pioneered mainstream AI adoption with its conversational interface and broad accessibility, accumulating hundreds of millions of users worldwide. Google Gemini leveraged the company’s massive computational resources and integration with existing Google services to create a tightly integrated AI ecosystem. Anthropic’s Claude carved out a distinctive position by emphasizing safety, nuanced understanding, and enterprise-grade reliability that appeals to professional users and organizations with stringent accuracy requirements.

The challenge facing users in 2026 extends far beyond simply choosing the AI with the highest benchmark scores or most impressive demo videos. Real-world performance depends on countless factors including the specific nature of tasks being performed, the user’s workflow requirements, integration capabilities with existing tools, cost considerations across different usage patterns, and subjective preferences around interface design and interaction style. Benchmark scores measuring performance on academic datasets often fail to capture the nuanced differences that emerge during actual daily usage, where factors like consistency across varied prompts, ability to maintain context through long conversations, handling of edge cases and unusual requests, and graceful degradation when facing ambiguous or incomplete information become critically important.

Marcus decided to conduct his own comprehensive testing rather than relying on corporate marketing claims or isolated user testimonials that might not reflect his specific needs. Over three intensive weeks in late December 2025 and early January 2026, he designed and executed fifty distinct real-world tasks spanning the full range of capabilities these AI assistants claim to possess. Each task represented actual work he regularly performs or problems his clients frequently encounter, ensuring practical relevance rather than artificial benchmark optimization. The tasks included complex software debugging requiring multi-file analysis, creative content generation for marketing campaigns, technical documentation creation, data analysis and visualization, research synthesis across multiple sources, conversational assistance for brainstorming and planning, multimodal tasks combining text and images, and edge cases designed to test handling of ambiguous or contradictory instructions.

The testing protocol Marcus developed prioritized consistency and fairness across all three platforms. Each task received identical input prompts across ChatGPT, Gemini, and Claude, with careful attention to avoiding platform-specific optimizations that might unfairly advantage one assistant. Tasks were distributed across different times of day to account for potential performance variations due to server load or model updates. Multiple trials of critical tasks ensured results reflected typical performance rather than outlier responses. Marcus documented not just final outputs but also the interaction process, noting how many follow-up prompts were required, whether the AI understood instructions on first attempt, quality of error recovery when initial responses proved inadequate, and overall user experience including interface responsiveness and result presentation.

The stakes of choosing the right AI assistant extend beyond mere convenience or personal preference. For professionals like Marcus who rely on these tools for income-generating work, the differences in capability, reliability, and cost-effectiveness directly impact productivity and profitability. A coding assistant that consistently produces buggy code wastes hours in debugging that could be spent on new features. A writing assistant that requires extensive editing defeats the purpose of using AI for content generation. A research assistant that hallucinates sources or misunderstands queries undermines trust and creates verification overhead that negates time savings. Understanding which AI excels at which tasks enables users to make informed decisions about subscriptions, workflow integration, and task delegation strategies.

The regulatory landscape surrounding artificial intelligence adds another dimension to the decision-making process, with government agencies increasingly scrutinizing AI deployment and establishing frameworks for responsible development. The Federal Trade Commission has intensified focus on artificial intelligence consumer protection, investigating deceptive AI marketing claims and establishing enforcement priorities around transparency and fairness. The National Institute of Standards and Technology provides AI risk management frameworks that guide organizations deploying AI systems, establishing voluntary guidelines that major AI companies reference in their safety documentation. These regulatory developments influence product features, data handling practices, and transparency measures that differentiate the platforms beyond raw capability comparisons.

Marcus’s testing journey revealed surprising insights that contradicted both his expectations and conventional wisdom about these AI assistants. Some tasks where ChatGPT dominated early 2025 testing had shifted to Gemini or Claude superiority by January 2026 due to model updates and capability improvements. Other tasks showed remarkably consistent performance across all three platforms, suggesting that for certain applications the choice of AI matters less than commonly assumed. Perhaps most significantly, Marcus discovered that the “best” AI varied dramatically depending on task characteristics, with no single platform demonstrating universal superiority across the diverse workload he tested. Understanding these nuances transforms the question from “which AI is best” to “which AI is best for what” – a more practical framework for users making technology decisions.

As these AI giants continue to evolve, their power is no longer confined to complex developer environments; it has permeated our daily routines through intuitive mobile applications and productivity tools. For those looking to bridge the gap between high-level language models and practical daily assistance, discovering the best AI apps for everyday use is essential to truly maximize productivity in 2026.

The Three Giants of AI Assistants

ChatGPT arrived in late 2022 as a cultural phenomenon that introduced mainstream audiences to the transformative potential of large language models, demonstrating through an accessible conversational interface how AI could assist with writing, analysis, coding, and countless other tasks. OpenAI’s creation sparked both enthusiasm and concern as millions of users experimented with capabilities that seemed to approach human-level performance on many cognitive tasks. By January 2026, ChatGPT had evolved through multiple major iterations, with the current flagship model GPT-4.1 representing substantial improvements over GPT-3.5, which powered the original launch, and GPT-4, which followed in 2023. The platform expanded beyond pure text interaction to incorporate DALL-E image generation, advanced data analysis capabilities, integrations with external services, and custom GPT creation allowing users to build specialized assistants for specific workflows.

According to OpenAI GPT-4 research documentation, the latest models demonstrate remarkable improvements in instruction following, coding ability, mathematical reasoning, and creative content generation compared to predecessors. GPT-4.1 scores fifty-four point six percent on SWE-bench Verified, a benchmark evaluating AI ability to solve real-world software engineering problems drawn from open source projects. Through the API, the model supports context windows of up to one million tokens, enough to process entire codebases or lengthy documents within a single conversation. ChatGPT’s architecture emphasizes conversational flexibility, allowing users to refine outputs through iterative dialogue, request modifications to generated content, and explore alternative approaches through natural language interaction rather than specialized prompting techniques.

The ChatGPT subscription model offers multiple tiers addressing different user needs and budgets. The free tier provides access to GPT-4o with usage limits that reset periodically, enabling casual users to experience advanced capabilities without financial commitment. ChatGPT Plus at twenty dollars monthly substantially raises usage limits and provides priority access to new features, faster response times, and access to models like GPT-4.1 and DALL-E 3 for image generation. ChatGPT Team and Enterprise tiers add administrative controls, security features, and unlimited high-speed access appropriate for organizational deployment. The pricing structure reflects OpenAI’s strategy of maximizing accessibility while monetizing intensive usage patterns and enterprise requirements.

Google Gemini emerged from the company’s extensive AI research heritage spanning more than a decade, leveraging Google’s massive computational infrastructure and unparalleled access to training data from web crawling, user interactions, and proprietary datasets. Introduced in late 2023, Gemini was specifically designed as a natively multimodal model capable of seamlessly processing and generating combinations of text, images, audio, and video. This architectural decision distinguishes Gemini from competitors that achieved multimodality through separate specialized models connected by orchestration layers. By January 2026, the Gemini family included multiple variants optimized for different use cases, and Google Gemini AI announcements present Gemini 3 Pro as the flagship model, with state-of-the-art performance across reasoning, multimodal understanding, and coding benchmarks.

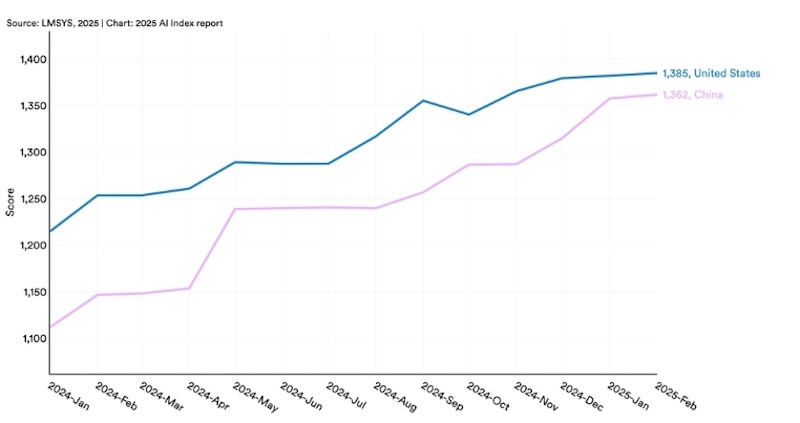

Gemini 3 Pro achieves ninety-one point nine percent on GPQA Diamond, which tests PhD-level expert reasoning, and eighty-one percent on MMMU-Pro, a multimodal benchmark that requires interpreting complex visual information in context. The model tops the LMArena Leaderboard with an Elo rating of 1501, reflecting aggregated performance across diverse tasks as judged by human raters comparing responses from different AI models. Gemini’s integration with Google services creates unique capabilities unavailable to competitors, including seamless access to Google Search for current information, integration with Google Workspace for document processing and email composition, and connection to the broader Google ecosystem including Maps, YouTube, and other properties. This tight integration appeals particularly to users already embedded in Google’s productivity environment.
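An Elo-style rating is easiest to read through the expected-score formula: the gap between two ratings maps to the share of head-to-head comparisons one model is expected to win. LMArena fits its scores with a statistical model rather than classic sequential Elo updates, so treat the sketch below as an interpretation of the scale, not LMArena's method. The 1100 rating in the example is hypothetical.

```python
def expected_preference(rating_a: float, rating_b: float) -> float:
    """Expected share of head-to-head wins for A over B on the Elo scale.

    Standard Elo logistic curve with a 400-point scale factor:
    E_A = 1 / (1 + 10 ** ((R_B - R_A) / 400))
    """
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


# Equal ratings imply a coin flip; a 400-point gap implies roughly 10:1 odds.
print(expected_preference(1501, 1501))            # 0.5
print(round(expected_preference(1500, 1100), 3))  # 0.909
```

Small gaps matter less than they look: a 20-point lead corresponds to winning only slightly more than half of comparisons.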

The Gemini pricing structure mirrors ChatGPT’s tiered approach with some variations reflecting different strategic priorities. Gemini offers free access to Gemini 3 Flash for all users, providing capable AI assistance without a subscription but with usage limits that prevent extremely intensive use. Google AI Pro (formerly Google One AI Premium), at about twenty dollars monthly, provides higher-limit access to Gemini 3 Pro and Gemini 3 Flash, integration with Google Workspace apps, and two terabytes of cloud storage. For developers and enterprises, Google Cloud’s Vertex AI provides Gemini access through an API with flexible pricing based on token consumption rather than flat subscription fees. This approach allows organizations to scale usage dynamically and pay only for actual consumption rather than predicting usage patterns for subscription planning.

Anthropic’s Claude is a newer entrant to the consumer AI market, but one backed by substantial funding and led by AI safety researchers committed to developing powerful AI systems with robust safety properties. Founded by former OpenAI researchers including Dario Amodei, Anthropic emphasizes constitutional AI techniques designed to make models helpful, harmless, and honest through training procedures that instill values and safety constraints. According to the Claude 3.7 Sonnet announcement from Anthropic, the latest model introduces a hybrid reasoning approach allowing users to choose between standard rapid response mode and extended thinking mode, in which the model engages in visible step-by-step reasoning before providing answers.

Claude 3.7 Sonnet scores sixty-two point three percent on SWE-bench Verified in standard mode, rising to seventy point three percent with a custom scaffold, which established state-of-the-art coding performance at its release. The model demonstrates particular strength in instruction following, nuanced understanding of context and constraints, visual data extraction from charts and graphs, and maintaining coherent reasoning across long documents or conversations. Claude’s architecture emphasizes avoiding unnecessary refusals while maintaining appropriate safety boundaries, addressing a common frustration where AI assistants refuse benign requests due to overly cautious safety filters. The extended thinking capability allows Claude to tackle problems requiring careful analysis, showing users its reasoning process and enabling verification of logical steps rather than simply presenting final answers.

The Claude subscription model features free access to Claude 3.7 Sonnet with usage limits, and Claude Pro at twenty dollars monthly providing substantially higher limits and priority access during peak demand periods. For developers, Anthropic offers API access priced at three dollars per million input tokens and fifteen dollars per million output tokens for Claude 3.7 Sonnet, with thinking tokens counted as output when extended thinking mode is activated. Because thinking tokens are billed at the standard output rate, extended thinking carries no premium per-token price, unlike some competitors that charge higher rates for reasoning capabilities; longer reasoning still costs more simply because it generates more tokens. Enterprise deployments can access Claude through Amazon Bedrock or Google Cloud Vertex AI, providing integration options for organizations already committed to particular cloud platforms.
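Using the per-token prices quoted above, a request's API cost is the input tokens times the input rate plus the visible output and thinking tokens times the output rate. A minimal sketch; the token counts in the example are hypothetical, and actual bills should be checked against Anthropic's current price list.

```python
# Claude 3.7 Sonnet API prices as quoted in this article (USD per million tokens).
INPUT_USD_PER_MTOK = 3.00
OUTPUT_USD_PER_MTOK = 15.00  # thinking tokens are billed at this output rate


def request_cost(input_tokens: int, output_tokens: int, thinking_tokens: int = 0) -> float:
    """Estimated USD cost of one request; thinking tokens count as output."""
    billed_output = output_tokens + thinking_tokens
    return (input_tokens * INPUT_USD_PER_MTOK + billed_output * OUTPUT_USD_PER_MTOK) / 1_000_000


# Hypothetical request: 10k input tokens, 2k visible output tokens.
print(f"{request_cost(10_000, 2_000):.2f}")         # 0.06 (standard mode)
print(f"{request_cost(10_000, 2_000, 8_000):.2f}")  # 0.18 (with 8k thinking tokens)
```

The per-token rate is the same in both modes, yet the extended-thinking request costs three times as much because it produces four times as many billed output tokens.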

The competitive dynamics between these three AI giants create a rapidly evolving landscape where capabilities, features, and pricing structures change frequently in response to competitor moves and user feedback. OpenAI’s established market position and brand recognition provide advantages in user acquisition and ecosystem development, with extensive third-party integrations and applications built on ChatGPT’s API. Google’s vast resources and integration with existing services create moats around users already invested in Google’s ecosystem, making Gemini a natural choice for Google Workspace users. Anthropic’s focus on safety, reliability, and nuanced understanding appeals to enterprise customers and professional users who prioritize accuracy over breadth of features. Understanding these strategic positions helps users evaluate which platform aligns with their priorities and workflow requirements.

Testing Methodology: 50 Real-World Tasks

Marcus designed his testing protocol to reflect actual work scenarios rather than artificial benchmarks optimized for impressive marketing claims but disconnected from practical usage. The fifty tasks spanned ten distinct categories, each containing five tasks of varying complexity and requirements. Coding tasks included debugging production code with subtle logic errors, implementing new features from specification documents, refactoring legacy code for improved maintainability, creating automated tests for existing functions, and generating documentation from uncommented code. Writing tasks covered blog post creation on technical topics, marketing copy generation with specific brand voice requirements, technical documentation for software APIs, creative storytelling with consistent character development, and email composition balancing professionalism with friendliness.

Data analysis tasks required processing CSV files to identify trends, generating visualizations from numerical datasets, synthesizing research findings from multiple academic papers, fact-checking claims against authoritative sources, and creating summary reports from lengthy documents. Multimodal tasks involved analyzing charts and graphs to extract quantitative information, describing images with sufficient detail for accessibility purposes, comparing visual designs and providing constructive feedback, extracting text from documents with complex layouts, and answering questions requiring integration of visual and textual information. Conversational tasks tested brainstorming capabilities for product development, providing nuanced advice on complex personal decisions, maintaining context through lengthy multi-turn discussions, recovering gracefully from misunderstandings or ambiguous requests, and adapting tone appropriately to different contexts.

Mathematical and logical reasoning tasks included solving multi-step word problems requiring equation formulation, identifying logical fallacies in arguments, optimizing allocation problems with constraints, explaining complex concepts through appropriate analogies, and debugging algorithmic thinking errors in proposed solutions. Language tasks covered translation between languages while preserving tone and idiom, explaining cultural context for communication, identifying grammatical errors and suggesting corrections, adapting technical content for general audiences, and generating content in specific stylistic voices. Creative tasks required generating novel product ideas from constraints, creating metaphors and analogies for abstract concepts, developing plot outlines with compelling narrative arcs, suggesting unusual solutions to common problems, and improvising responses to unexpected scenarios.

Specialized domain tasks tested knowledge and reasoning in specific professional areas including legal document analysis identifying key clauses and risks, medical information synthesis with appropriate caveats about limitations, financial calculations involving investment scenarios, scientific research summarization maintaining technical accuracy, and educational content creation at appropriate difficulty levels. Meta-cognitive tasks evaluated each AI’s ability to acknowledge uncertainty rather than confidently hallucinating information, explain its reasoning process when requested, recognize the limits of its knowledge or capabilities, suggest when alternative approaches might be more effective, and demonstrate self-correction when initial responses proved inadequate.

The evaluation criteria Marcus established went beyond simple binary pass-fail judgments to capture nuanced differences in quality, efficiency, and user experience. For each task, he assessed correctness of the final output, completeness in addressing all aspects of the request, efficiency measured by prompts required to achieve satisfactory results, coherence and logical flow of generated content, creativity and originality where appropriate, consistency with previous responses and established context, appropriate handling of edge cases and ambiguity, clarity of explanation and reasoning, adherence to specified constraints and requirements, and overall user experience including interface responsiveness and result presentation.

To ensure fairness and consistency, Marcus employed several methodological safeguards against bias and random variation. Tasks were randomized in order across sessions to prevent fatigue or learning effects from influencing results. Each task received identical prompts across all three platforms, with careful verification that no platform-specific optimizations inadvertently advantaged any assistant. Testing occurred across different times of day and days of week to account for potential server load variations affecting performance. Critical tasks underwent multiple trials to distinguish typical performance from outlier responses. Marcus documented not just final outputs but the entire interaction sequence, capturing misunderstandings that required clarification, detours taken before arriving at satisfactory solutions, and quality of incremental improvements through iterative refinement.
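A minimal sketch of how such a randomized but reproducible schedule might be generated, assuming the tasks are kept in a dictionary of prompts. The function and its structure are hypothetical, not Marcus's actual tooling: each session gets a fresh shuffle of task order, and every task is paired with the identical prompt on all three platforms.

```python
import random


def build_schedule(prompts: dict, sessions: int, seed: int = 0) -> list:
    """Return one run list per session of (task_id, platform, prompt) tuples.

    Task order is reshuffled every session; each task is sent to every
    platform with the identical prompt text.
    """
    platforms = ["ChatGPT", "Gemini", "Claude"]
    rng = random.Random(seed)  # seeded so the schedule can be reproduced later
    schedule = []
    for _ in range(sessions):
        order = list(prompts)
        rng.shuffle(order)  # fresh order each session to limit fatigue and learning effects
        schedule.append([(task, p, prompts[task]) for task in order for p in platforms])
    return schedule
```

Seeding the generator keeps the randomization auditable: rerunning with the same seed reproduces the exact order used.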

The testing environment reflected Marcus’s actual working conditions rather than idealized laboratory settings. Tasks were performed using the standard web interfaces for each platform, matching how most users interact with these assistants. No specialized prompting techniques or platform-specific optimizations were employed unless they would be obvious to typical users. Internet connectivity remained consistent across tests to avoid network-related performance variations. Marcus used the same machine and software configuration for every session, so hardware and software remained constant throughout testing. This realistic environment captures the experience actual users encounter rather than the performance achievable under carefully controlled conditions that might not reflect typical usage scenarios.

Certain methodological limitations necessarily affect the interpretation of results and their generalizability to other users and contexts. The fifty tasks, while diverse, inevitably reflect Marcus’s particular work focus and may not represent the priorities of users in different fields or with different workflow requirements. Evaluation criteria included subjective judgments about quality and appropriateness that might vary between different evaluators with different standards and preferences. The testing occurred during a specific time window in late December 2025 and early January 2026, meaning subsequent model updates might shift relative performance. Usage patterns reflected Marcus’s interaction style and prompting approach, which may differ from other users who might achieve better or worse results through different techniques. These limitations suggest viewing results as one data point rather than definitive rankings, with individual users benefiting from their own focused testing on tasks matching their specific needs.

Coding and Programming Performance

The coding tasks Marcus designed represented realistic software development challenges rather than algorithmic puzzles or trivial code generation exercises. The first task involved debugging a production Python script that intermittently failed when processing certain CSV files, with the error occurring only with specific data patterns that weren’t immediately obvious from error messages. ChatGPT GPT-4.1 identified the problem within two prompts, correctly diagnosing an issue with quote escaping in CSV parsing that manifested only when fields contained both quotes and commas. The solution it proposed worked correctly and included explanation of why the original approach failed. Google Gemini 3 Pro took three prompts to arrive at the same diagnosis, initially suggesting unrelated fixes before identifying the actual issue. Claude 3.7 Sonnet in extended thinking mode showed its step-by-step reasoning process, methodically eliminating possible causes before identifying the quote escaping problem, arriving at the solution in two prompts with particularly clear explanation.
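The script itself is not shown in the article, so the following is a hypothetical reproduction of the bug class described: a field containing both commas and quotes. It contrasts naive comma splitting with Python's standard `csv` module, which follows the usual CSV quoting convention: a quoted field may contain commas, and a doubled quote inside a quoted field stands for one literal quote.

```python
import csv
import io

# Second row: the first field contains a comma, the second contains
# escaped quotes ("" inside a quoted field) and a comma.
RAW = 'name,notes\n"Widget, large","He said ""fragile"", handle with care"\n'


def naive_parse(text):
    # Splitting on commas ignores quoting rules, so such rows fall apart.
    return [line.split(",") for line in text.strip().splitlines()]


def robust_parse(text):
    # csv.reader honours quoting and doubled-quote escapes.
    return list(csv.reader(io.StringIO(text)))


print(len(naive_parse(RAW)[1]))  # 4 fields instead of 2
print(robust_parse(RAW)[1])      # ['Widget, large', 'He said "fragile", handle with care']
```

Rows without quotes parse identically either way, which is why a bug like this appears only intermittently.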

The second coding task required implementing a new feature for an existing JavaScript application, specifically adding real-time validation to a form with complex interdependent fields where validation rules for one field depended on values in others. ChatGPT provided a complete implementation that mostly worked but had a subtle race condition when users typed quickly, causing validation to execute on stale values. Gemini 3 Pro delivered code that functioned correctly across all test cases, with clean structure and appropriate use of debouncing to handle rapid input. Claude generated code that worked correctly but used a slightly more verbose approach than necessary, though the additional verbosity improved readability for future maintenance. When Marcus pointed out the race condition in ChatGPT’s solution, it immediately recognized the issue and provided a corrected implementation, demonstrating good error recovery.
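The article does not include the form code, so the sketch below is a hypothetical illustration, written in Python rather than JavaScript to keep this article's examples in one language. It shows one common guard against the stale-value race described: tag each validation run with a sequence number and ignore results from runs that a newer keystroke has overtaken. Debouncing reduces how many runs start; the sequence check handles those that still overlap. The field names and the date rule are assumptions.

```python
from datetime import date


def date_range_errors(fields):
    """Hypothetical interdependent rule: end_date must not precede start_date."""
    start, end = fields.get("start_date"), fields.get("end_date")
    if start and end and end < start:
        return ["end_date must be on or after start_date"]
    return []


class LatestOnlyValidator:
    """Applies a validation result only if no newer run has started since."""

    def __init__(self, rule):
        self.rule = rule
        self.latest_seq = 0
        self.errors = []

    def begin(self, fields):
        # Tag this run and snapshot the values it will validate.
        self.latest_seq += 1
        return self.latest_seq, dict(fields)

    def complete(self, seq, snapshot):
        # A run overtaken by newer input is stale: drop its result.
        if seq != self.latest_seq:
            return False
        self.errors = self.rule(snapshot)
        return True


v = LatestOnlyValidator(date_range_errors)
old = v.begin({"start_date": date(2026, 1, 10), "end_date": date(2026, 1, 5)})
new = v.begin({"start_date": date(2026, 1, 10), "end_date": date(2026, 1, 12)})
v.complete(*new)  # applied: the latest values are valid
v.complete(*old)  # ignored: finishing late must not resurrect an old error
```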

Refactoring legacy code for improved maintainability tested each AI’s ability to understand existing code structure, identify problematic patterns, and suggest improvements while maintaining functional equivalence. The task involved a three-hundred-line PHP function performing multiple responsibilities with deeply nested conditionals and minimal comments. Claude 3.7 Sonnet excelled at this task, providing a comprehensive refactoring that separated concerns into logical functions, reduced nesting depth, added meaningful variable names, and included comments explaining non-obvious logic. ChatGPT’s refactoring addressed the major structural issues but missed some opportunities for improvement that Claude identified. Gemini produced a solid refactoring but made slightly more aggressive changes that, while improving code quality, altered behavior in edge cases not covered by the existing test suite.
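One way to catch refactorings that quietly change edge-case behavior, as Gemini's did, is a characterization test that runs the legacy and refactored functions side by side over a grid of inputs. The sketch uses a small hypothetical shipping-fee function in Python as a stand-in for the three-hundred-line PHP original, which the article does not reproduce.

```python
import itertools


def shipping_fee_legacy(order):
    # Deeply nested original (hypothetical stand-in for the legacy function).
    if order is not None:
        if order.get("items"):
            if order.get("express"):
                if order.get("total", 0) >= 100:
                    return 10
                else:
                    return 25
            else:
                if order.get("total", 0) >= 50:
                    return 0
                else:
                    return 5
        else:
            return 0
    else:
        return 0


def shipping_fee(order):
    # Refactored with guard clauses; must match the legacy version on every input.
    if not order or not order.get("items"):
        return 0
    total = order.get("total", 0)
    if order.get("express"):
        return 10 if total >= 100 else 25
    return 0 if total >= 50 else 5


def characterization_inputs():
    # Include None, boundary totals, and missing keys: edge cases an existing suite may skip.
    yield None
    yield {"items": ["x"]}
    for items, express, total in itertools.product(
        [[], ["x"]], [False, True], [0, 49.99, 50, 99.99, 100, 250]
    ):
        yield {"items": items, "express": express, "total": total}


mismatches = [o for o in characterization_inputs() if shipping_fee(o) != shipping_fee_legacy(o)]
print(mismatches)  # []
```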

Creating automated tests for existing functions challenged the AIs to understand code behavior, identify edge cases requiring coverage, and generate comprehensive test suites. ChatGPT demonstrated strength in this area, generating pytest test cases that achieved ninety-two percent code coverage and included thoughtful edge cases like empty inputs, boundary values, and invalid data types. Gemini matched the coverage percentage but with slightly less readable test names and organization. Claude produced tests with eighty-eight percent coverage but with particularly clear test structure and excellent documentation explaining what each test verified and why. All three successfully identified the same critical edge case involving timezone handling that the original implementation handled incorrectly, though only Claude explicitly called out this bug in its test descriptions.
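The function under test is not shown, so this is a hypothetical sketch of the kind of timezone edge case described: a timestamp shortly after midnight UTC falls on the previous calendar day in a zone west of UTC. The tests are pytest-style functions (plain `assert`, discoverable by pytest) that also run as ordinary Python. `local_day` and the fixed UTC-5 offset are assumptions; a real implementation would likely use `zoneinfo` so daylight saving time is handled.

```python
from datetime import datetime, timedelta, timezone


def local_day(ts: datetime, tz: timezone) -> str:
    """Calendar day (YYYY-MM-DD) of an aware timestamp in the given zone."""
    if ts.tzinfo is None:
        raise ValueError("timestamp must be timezone-aware")
    return ts.astimezone(tz).date().isoformat()


EASTERN_STANDARD = timezone(timedelta(hours=-5))  # fixed offset; ignores DST


def test_day_boundary_shifts_with_zone():
    ts = datetime(2026, 1, 6, 3, 0, tzinfo=timezone.utc)  # 22:00 on Jan 5 in UTC-5
    assert local_day(ts, timezone.utc) == "2026-01-06"
    assert local_day(ts, EASTERN_STANDARD) == "2026-01-05"


def test_naive_timestamp_rejected():
    try:
        local_day(datetime(2026, 1, 6), timezone.utc)
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for a naive timestamp")
```

A buggy version that calls `ts.date()` without converting first would pass the UTC assertion and fail the UTC-5 one, which is the kind of defect these boundary tests surface.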

Documentation generation from uncommented code tested understanding of code purpose and ability to explain technical concepts clearly. The task involved a complex Rust library function implementing a graph algorithm with non-trivial complexity. Claude produced exceptionally clear documentation that would be immediately useful to developers, explaining not just what the function does but why the particular algorithmic approach was chosen and what performance characteristics users should expect. ChatGPT generated comprehensive documentation covering all parameters and return values but with less insight into the algorithmic approach and tradeoffs. Gemini’s documentation accurately described functionality but used slightly more technical jargon that might be less accessible to developers unfamiliar with graph theory.
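To illustrate the difference between documentation that lists parameters and documentation that explains the algorithmic choice, here is a hypothetical Python analogue (the Rust library is not shown in the article): a Dijkstra shortest-path function whose docstring records its preconditions, the reason for using a binary heap, and the expected complexity.

```python
import heapq


def shortest_distances(graph, source):
    """Shortest-path distances from ``source`` using Dijkstra's algorithm.

    Args:
        graph: adjacency mapping ``{node: [(neighbor, weight), ...]}``.
            Weights must be non-negative; Dijkstra's greedy choice is only
            correct under that assumption. Nodes must be mutually comparable
            (e.g. all strings), because heap entries with equal distances
            are ordered by node.
        source: the starting node.

    Returns:
        dict mapping every reachable node to its distance from ``source``.
        Unreachable nodes are omitted.

    Why this approach: a binary heap (``heapq``) makes the next-closest node
    cheap to find, giving O((V + E) log V) time, which suits sparse graphs.
    ``heapq`` has no decrease-key operation, so outdated entries are skipped
    lazily; the heap can therefore hold O(E) entries in the worst case.
    """
    dist = {source: 0}
    heap = [(0, source)]
    while heap:
        d, node = heapq.heappop(heap)
        if d > dist.get(node, float("inf")):
            continue  # stale entry: a shorter path was already recorded
        for neighbor, weight in graph.get(node, []):
            candidate = d + weight
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                heapq.heappush(heap, (candidate, neighbor))
    return dist
```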

Advanced coding tasks revealed more nuanced differences in capability and approach. When asked to optimize a performance-critical section of code, ChatGPT suggested specific algorithmic improvements with clear explanation of complexity improvements but occasionally proposed optimizations that would actually harm performance in the typical use case. Gemini demonstrated strong performance profiling instincts, asking clarifying questions about expected data characteristics before proposing optimizations tailored to those patterns. Claude’s extended thinking mode proved particularly valuable for optimization tasks, showing the reasoning process it used to evaluate different optimization strategies before recommending the most appropriate approach. The visible thinking helped Marcus understand not just what to change but why the suggested optimization would improve performance.
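The code Marcus optimized is not shown. As a hypothetical illustration of why data characteristics matter, the two functions below answer the same question: does a list contain duplicates? The set-based version is asymptotically faster, but it requires hashable elements and extra memory, and for very short lists the simple scan may be just as quick. Clarifying questions about the data are what reveal which trade-off applies.

```python
def has_duplicates_quadratic(items):
    # O(n^2) comparisons, but works for unhashable items and uses no extra memory.
    return any(
        items[i] == items[j]
        for i in range(len(items))
        for j in range(i + 1, len(items))
    )


def has_duplicates_hashed(items):
    # O(n) expected time via a set; needs hashable items and O(n) extra memory.
    seen = set()
    for item in items:
        if item in seen:
            return True
        seen.add(item)
    return False
```

Lists of lists, for example, work with the quadratic version but make the hashed version raise `TypeError`. An "optimization" that ignores this changes behavior instead of just speeding things up.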

Cross-language code translation tasks, where Marcus asked each AI to convert Python code to equivalent Go implementations, revealed interesting patterns in how each model approached language differences. ChatGPT generally produced idiomatic code in the target language rather than direct translations, which resulted in more natural code but occasionally changed behavior in subtle ways. Gemini took a more literal translation approach, preserving structure from the original even when the target language had more idiomatic patterns available. Claude balanced these approaches well, translating directly when semantics were straightforward but noting when idiomatic patterns in the target language offered advantages. All three occasionally missed language-specific gotchas like Go’s different error handling conventions or nil pointer semantics.
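The gotchas named above involve error handling and nil pointers. Integer arithmetic is another place where a literal Python-to-Go translation silently changes behavior: Python's `//` floors and its `%` takes the sign of the divisor, while Go's integer `/` truncates toward zero and `%` takes the sign of the dividend. The helper names below are hypothetical; they emulate Go's semantics in Python so the difference can be checked directly.

```python
def go_div(a: int, b: int) -> int:
    """Integer division as Go defines it for ints: truncate toward zero."""
    q = abs(a) // abs(b)
    return q if (a >= 0) == (b > 0) else -q


def go_mod(a: int, b: int) -> int:
    """Go's % for ints: sign follows the dividend, so a == go_div(a, b) * b + go_mod(a, b)."""
    return a - go_div(a, b) * b


print(-7 // 2, go_div(-7, 2))  # -4 -3
print(-7 % 2, go_mod(-7, 2))   # 1 -1
```

Results agree whenever both operands are non-negative, which is why translated code can pass typical tests and still misbehave on negative inputs.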

The coding performance results demonstrated that choice of AI can significantly impact development efficiency, though no single platform dominated across all coding scenarios. For rapid prototyping and initial implementation, ChatGPT’s quick responses and generally correct initial outputs made it highly productive. When code quality and maintainability mattered more than speed, Claude’s thoughtful approach and clear explanations proved valuable. Gemini occupied a middle ground, performing well across most tasks without particular standouts in either direction. Developers working with cutting-edge frameworks or recently released language versions should note that all three models occasionally struggled with very recent features, suggesting training data cutoffs affect their knowledge of latest developments.

Creative Writing and Content Generation

The creative writing tasks tested each AI’s ability to generate engaging, original content across diverse formats and constraints while maintaining consistent voice and appropriate tone. Marcus began with a blog post assignment requiring explanation of a complex technical concept – blockchain consensus mechanisms – for a general audience without technical background. ChatGPT excelled at this task, producing an engaging narrative using everyday analogies like committee voting and restaurant reservations to illustrate how consensus algorithms work. The post maintained consistent metaphors throughout, avoided jargon effectively, and struck an appropriate balance between accuracy and accessibility. Gemini produced a similarly accessible post but with slightly more technical terminology that might challenge non-technical readers. Claude’s output was accurate and well-structured but fell into the common trap of being somewhat dry despite the accessible language, lacking the engaging storytelling elements that made ChatGPT’s version particularly readable.

Marketing copy generation with specific brand voice requirements challenged the AIs to capture subtle tonal qualities while persuasively presenting product benefits. The task called for ad copy for a sustainable water bottle with a brand voice described as “enthusiastic but not pushy, eco-conscious without being preachy, and emphasizing joy over guilt.” ChatGPT’s copy hit most of these marks, with upbeat language and positive framing, though it occasionally veered slightly too enthusiastic. Gemini’s copy kept an appropriate level of enthusiasm but felt somewhat generic, lacking memorable phrases or a distinctive voice. Claude’s copy most closely captured the specified tone, balancing environmental responsibility and personal benefit particularly well and avoiding both guilt-tripping and greenwashing while keeping a genuine enthusiasm.

Technical documentation creation for software APIs required clarity, completeness, accuracy, and appropriate organization for developer audiences. ChatGPT generated comprehensive documentation covering all API endpoints with clear parameter descriptions and example code snippets. However, the examples occasionally made assumptions about setup or configuration that weren’t explicitly stated, potentially frustrating developers following the documentation. Gemini’s documentation was similarly comprehensive with particularly good organization and navigation structure, though some descriptions leaned toward implementation details rather than focusing on what developers need to know to use the API effectively. Claude produced documentation that developers would likely find most useful, with clear explanations, realistic examples that included error handling, and helpful troubleshooting sections addressing common issues.

Creative storytelling with consistent character development challenged the AIs to maintain narrative coherence while creating engaging fiction. Marcus asked each AI to write the opening chapter of a science fiction story about a detective investigating disappearances on a space station, with specific requirements for the protagonist’s personality and the story’s tone. ChatGPT created an engaging narrative with strong descriptive writing and good pacing, though the protagonist’s voice occasionally shifted between scenes. Gemini’s story was more consistent in voice and characterization but used somewhat pedestrian prose without memorable turns of phrase. Claude balanced character development, plot progression, and atmospheric description particularly well, with the detective’s personality coming through clearly in both dialogue and narration.

Email composition balancing professionalism with friendliness tested subtle tonal calibration in a common business scenario. The task required writing to a client who had missed several deadlines, firmly stressing the importance of the timeline while preserving a positive working relationship. ChatGPT’s email leaned slightly too friendly, potentially underselling the urgency. Gemini’s email was appropriately firm but felt somewhat impersonal and corporate. Claude struck the best balance, clearly communicating why the deadlines mattered and what further delays would mean while staying warm and acknowledging the client’s challenges, and it offered specific solutions rather than just restating the problem.

Long-form content generation revealed differences in coherence and structural organization across extended outputs. When asked to write a three-thousand-word article comparing database technologies, ChatGPT produced engaging content but occasionally repeated points or introduced minor contradictions between sections. Gemini maintained better structural consistency across the longer piece, with clear section transitions and a consistent framework for comparisons, though its writing style felt somewhat mechanical. Claude produced the most coherent long-form content, applying the same evaluation criteria throughout and offering particularly thoughtful analysis of tradeoffs rather than simply listing features.

Creative constraint tasks challenged the AIs to generate novel content within specific limitations. Marcus requested poetry in specific forms, stories restricted to words under a set character length, and marketing slogans incorporating required elements. ChatGPT showed the most creativity in approaching these constraints, often finding clever solutions that both satisfied the requirements and produced engaging results. Gemini followed the constraints precisely but sometimes produced technically correct yet uninspired output. Claude balanced creativity and constraint adherence well, occasionally questioning whether a specific constraint served the intended purpose and suggesting alternatives when a constraint might undermine effectiveness.
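Constraints such as a maximum word length can be checked mechanically, which confirms whether an output actually obeyed them instead of relying on a visual check. Below is a minimal sketch assuming a hypothetical rule that no word may exceed a given number of characters. The function name and the tokenization rule (letters, digits, and apostrophes count as word characters) are illustrative assumptions and were not part of the original test.

```python
import re


def words_over_limit(text: str, max_chars: int) -> list[str]:
    """Return every word in text that is longer than max_chars."""
    # Treat runs of letters, digits, and apostrophes as words; punctuation is ignored.
    words = re.findall(r"[A-Za-z0-9']+", text)
    return [w for w in words if len(w) > max_chars]


story = "The cat sat by the old red door and slept."
violations = words_over_limit(story, 4)
print(violations)  # ['slept']
```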

Tone adaptation across different contexts tested versatility and understanding of audience expectations. When presented with the same information but asked to write for audiences ranging from elementary school students to industry experts, ChatGPT demonstrated strong adaptation ability with appropriate vocabulary and complexity adjustments. Gemini also adapted well but sometimes overdid simplification for younger audiences in ways that might feel condescending. Claude’s adaptations were consistently appropriate, particularly excelling at the expert-level writing where technical precision and sophisticated analysis mattered most.

The creative writing results suggest that ChatGPT maintains advantages for initial drafts of engaging content where creativity and conversational tone matter most, while Claude excels when precision, consistency, and subtle tonal balance become priorities. Gemini performs competently across most creative tasks without dominating in any particular area, suggesting it represents a solid all-around choice for users who don’t have specialized creative writing requirements. For professional writers and content creators, combining multiple AIs for different stages of content development might prove more effective than relying exclusively on a single platform.

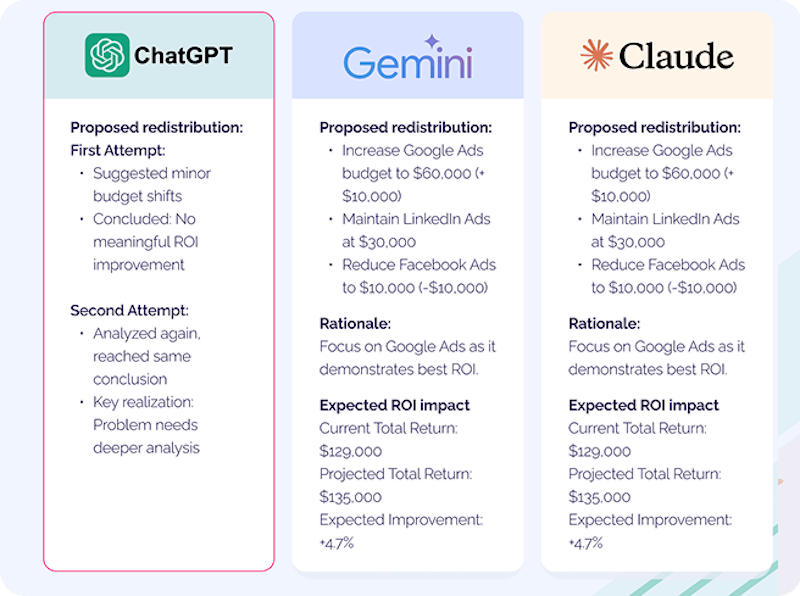

Data Analysis and Research Tasks

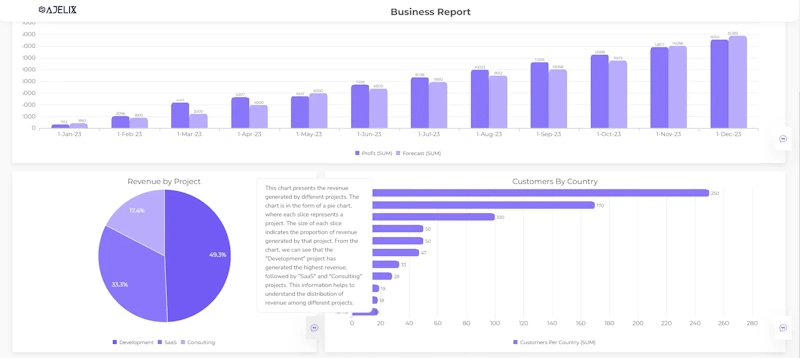

Data analysis and research tasks evaluated each AI’s ability to process structured and unstructured information, identify patterns, synthesize findings, and present insights clearly. Marcus began with a straightforward data processing task involving a CSV file containing sales data with missing values, inconsistent formatting, and entries requiring categorization. ChatGPT correctly identified data quality issues and proposed a Python pandas script to clean the dataset, handling missing values through appropriate imputation strategies based on data characteristics. The script worked correctly and included comments explaining each step. Gemini provided a similar solution with slightly more sophisticated handling of categorical variables but with less clear explanation of methodology choices. Claude’s solution matched the others in functionality while providing particularly thorough explanation of why specific data cleaning approaches were chosen over alternatives.
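The article does not reproduce the scripts. The following is only a minimal sketch of the kind of pandas cleaning it describes. The column names, sample values, median imputation, and size bands are all hypothetical. Median imputation is one common strategy for skewed numeric columns, and it is not necessarily what any of the three assistants proposed.

```python
import pandas as pd

# Hypothetical raw export with inconsistent text, formatted numbers, and gaps.
raw = pd.DataFrame({
    "region": [" North", "north", "SOUTH", None, "East "],
    "amount": ["1,200", "850", None, "430.5", "2,000"],
    "order_date": ["2025-01-03", "2025-01-04", "2025-01-05", "2025-01-06", "not a date"],
})


def clean_sales(df: pd.DataFrame) -> pd.DataFrame:
    out = df.copy()
    # Normalize inconsistent formatting: trim whitespace and unify capitalization.
    out["region"] = out["region"].str.strip().str.title()
    # Label missing categories explicitly instead of guessing a value.
    out["region"] = out["region"].fillna("Unknown")
    # Drop thousands separators, then convert; anything unparseable becomes NaN.
    out["amount"] = pd.to_numeric(out["amount"].str.replace(",", "", regex=False), errors="coerce")
    # Impute missing amounts with the median, which is robust to outliers.
    out["amount"] = out["amount"].fillna(out["amount"].median())
    # Parse dates; invalid strings become NaT so they can be reviewed later.
    out["order_date"] = pd.to_datetime(out["order_date"], errors="coerce")
    # Categorize each order into a size band (hypothetical thresholds).
    out["size_band"] = pd.cut(out["amount"], bins=[0, 500, 1500, float("inf")],
                              labels=["small", "medium", "large"])
    return out


cleaned = clean_sales(raw)
print(cleaned)
```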

Generating visualizations from numerical datasets tested understanding of which chart types effectively communicate different patterns and relationships. Given a dataset showing website traffic patterns across time with multiple metrics, ChatGPT recommended appropriate visualization types for each metric and generated matplotlib code producing clear, informative charts. However, the color choices and labeling could be improved for accessibility and clarity. Gemini’s visualization recommendations were sound with particularly good attention to color contrast and readability, generating code that produced professional-looking charts suitable for presentation. Claude provided similar recommendations but included especially helpful commentary about what insights each visualization type would reveal and potential misinterpretations to avoid.
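To illustrate the accessibility points above, here is a minimal matplotlib sketch. It plots two hypothetical traffic metrics on separate panels, uses colors from the colorblind-friendly Okabe-Ito palette, and distinguishes the series by marker and line style as well as by color. The data, metric names, and function name are assumptions made for illustration.

```python
import matplotlib
matplotlib.use("Agg")  # render off-screen so the sketch runs without a display
import matplotlib.pyplot as plt

# Hypothetical two weeks of daily traffic metrics.
days = list(range(1, 15))
sessions = [120, 135, 128, 150, 170, 90, 85, 140, 155, 149, 160, 180, 95, 88]
bounce_rate = [0.42, 0.40, 0.41, 0.38, 0.36, 0.50, 0.52, 0.39, 0.37, 0.38, 0.35, 0.34, 0.49, 0.51]


def plot_traffic(days, sessions, bounce_rate):
    # One panel per metric, because the scales differ too much to share an axis.
    fig, (ax1, ax2) = plt.subplots(2, 1, sharex=True, figsize=(8, 6))
    # Okabe-Ito blue and vermillion remain distinguishable for common color-vision deficiencies.
    ax1.plot(days, sessions, color="#0072B2", marker="o", label="Sessions")
    ax1.set_ylabel("Sessions per day")
    ax1.set_title("Website traffic (hypothetical data)")
    # A different marker and dashed line mean the series do not rely on color alone.
    ax2.plot(days, [r * 100 for r in bounce_rate], color="#D55E00", marker="s",
             linestyle="--", label="Bounce rate")
    ax2.set_ylabel("Bounce rate (%)")
    ax2.set_xlabel("Day")
    for ax in (ax1, ax2):
        ax.grid(alpha=0.3)
        ax.legend(loc="upper left")
    fig.tight_layout()
    return fig


fig = plot_traffic(days, sessions, bounce_rate)
# fig.savefig("traffic.png", dpi=150)  # uncomment to write the chart to disk
```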

Research synthesis tasks required processing multiple academic papers on related topics and extracting key findings, points of agreement and disagreement, and overall state of knowledge. Marcus provided five papers about machine learning interpretability techniques and asked each AI to synthesize the current state of the field. ChatGPT produced a coherent summary identifying main themes and controversies, though it occasionally conflated findings from different papers. Gemini demonstrated particularly strong performance on this task, clearly distinguishing between papers, accurately representing individual findings, and providing insightful analysis of how different approaches complemented or contradicted each other. Claude’s synthesis was similarly accurate with especially clear organization that made the complex landscape of techniques and tradeoffs understandable.

Fact-checking claims against authoritative sources tested each AI’s ability to locate relevant information, evaluate source credibility, and judge claim accuracy. Presented with five factual claims of varying verifiability, ChatGPT correctly evaluated four but confidently stated incorrect information about the fifth, a reminder that hallucination remains a problem even for models with access to current information. Gemini, benefiting from direct Google Search integration, verified all five claims and supplied source citations that let Marcus check the sources himself. Claude correctly determined that three claims could be verified with the information available and acknowledged uncertainty about the other two instead of hallucinating confident answers, though this meant its answers were less definitive than Gemini’s.

Creating summary reports from lengthy documents challenged information extraction and synthesis capabilities. Given a sixty-page technical report about cloud security architectures, ChatGPT produced a coherent summary capturing main points and recommendations, though it emphasized some sections disproportionately relative to their importance in the original document. Gemini’s summary maintained better proportionality and included useful visual organization with bullet points and sections matching the original document structure. Claude generated a summary that was slightly more concise while still capturing key information, with particularly effective highlighting of conclusions and actionable recommendations distinct from supporting analysis.

Complex analysis tasks requiring multi-step reasoning revealed more nuanced differences. When asked to analyze factors influencing customer churn in a fictional business scenario with multiple datasets describing customer behavior, contract terms, and support interactions, all three AIs correctly identified the need to integrate multiple data sources and proposed appropriate analytical approaches. ChatGPT provided clear step-by-step methodology but proposed some statistical tests that weren’t quite appropriate for the data characteristics. Gemini recommended sound statistical approaches with good attention to potential confounding variables, though the explanation of methodology assumed more statistical background than typical business analysts might possess. Claude’s analysis methodology was sound with particularly clear explanation accessible to non-statisticians, including helpful caveats about what conclusions could and couldn’t be drawn from available data.
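All three assistants identified an integration step, and in practice this usually means joining the datasets on a shared customer key before any modeling. Below is a minimal pandas sketch. The tables and columns (`customer_id`, `churned`, `contract`) are hypothetical, as is the threshold of two or more support tickets for "frequent contact". The result is a descriptive churn rate per segment. In line with the caveats above about what the data can support, it does not by itself establish what causes churn.

```python
import pandas as pd

# Hypothetical stand-ins for the scenario's behavior, contract, and support datasets.
customers = pd.DataFrame({"customer_id": [1, 2, 3, 4, 5, 6], "churned": [1, 0, 1, 0, 0, 1]})
contracts = pd.DataFrame({
    "customer_id": [1, 2, 3, 4, 5, 6],
    "contract": ["monthly", "annual", "monthly", "annual", "monthly", "monthly"],
})
tickets = pd.DataFrame({"customer_id": [1, 1, 3, 3, 3, 5, 6]})  # one row per support ticket


def churn_by_contract_and_support(customers, contracts, tickets):
    # Count support interactions per customer.
    ticket_counts = tickets.groupby("customer_id").size().rename("tickets").reset_index()
    # Join on the shared key; validate guards against accidental duplicate contracts.
    df = (customers
          .merge(contracts, on="customer_id", how="left", validate="one_to_one")
          .merge(ticket_counts, on="customer_id", how="left"))
    # Customers with no tickets have no row in ticket_counts, so fill with 0.
    df["tickets"] = df["tickets"].fillna(0).astype(int)
    df["frequent_contact"] = df["tickets"] >= 2
    # Descriptive churn rate and group size per segment; correlation, not causation.
    return df.groupby(["contract", "frequent_contact"])["churned"].agg(["mean", "count"])


summary = churn_by_contract_and_support(customers, contracts, tickets)
print(summary)
```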

Trend identification in time series data tested pattern recognition and explanation capabilities. Given three years of monthly sales data showing seasonal patterns, a gradual upward trend, and several anomalous periods, ChatGPT correctly identified all major patterns and proposed reasonable explanations for anomalies based on typical business factors. Gemini identified the same patterns with slightly more precise characterization of seasonality amplitude and trend magnitude. Claude’s analysis matched the others in identifying patterns but provided particularly thoughtful discussion of what additional data would help distinguish between different explanations for observed patterns, demonstrating awareness of analysis limitations.
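A common way to separate seasonality, trend, and anomalies is classical additive decomposition. The pure-Python sketch below estimates the trend with a centered 2×12 moving average and computes a seasonal index for each calendar month by averaging its detrended values. It then flags residuals whose absolute value exceeds three times the residuals’ standard deviation. The function names, the synthetic data, and the three-sigma threshold are assumptions made for illustration. The article does not say which method any assistant used.

```python
from statistics import mean, pstdev


def decompose_monthly(values, period=12):
    """Classical additive decomposition: value = trend + seasonal + residual."""
    if period % 2:
        raise ValueError("this sketch assumes an even period")
    n, half = len(values), period // 2
    trend = [None] * n
    # Centered 2x12 moving average: half weight on the two end points, full weight inside.
    for i in range(half, n - half):
        window = values[i - half:i + half + 1]
        trend[i] = (0.5 * window[0] + sum(window[1:-1]) + 0.5 * window[-1]) / period
    # Seasonal index: mean detrended value for each calendar position.
    detrended = {m: [] for m in range(period)}
    for i, t in enumerate(trend):
        if t is not None:
            detrended[i % period].append(values[i] - t)
    raw = [mean(detrended[m]) for m in range(period)]
    adjust = mean(raw)  # center the indices so they sum to zero over a year
    seasonal = [raw[i % period] - adjust for i in range(n)]
    residual = [values[i] - trend[i] - seasonal[i] if trend[i] is not None else None
                for i in range(n)]
    return trend, seasonal, residual


def flag_anomalies(residual, k=3.0):
    """Indices whose residual magnitude exceeds k population standard deviations."""
    known = [r for r in residual if r is not None]
    sd = pstdev(known)
    return [i for i, r in enumerate(residual) if r is not None and abs(r) > k * sd]


# Hypothetical 36 months: linear growth, a year-end bump, and one spike at index 18.
sales = [100 + 2 * i + (10 if i % 12 in (10, 11) else 0) for i in range(36)]
sales[18] += 60
trend, seasonal, residual = decompose_monthly(sales)
anomalies = flag_anomalies(residual)
print(anomalies)  # [6, 18]
```

With only three years of data, the trend is undefined for the first and last six months, and each seasonal index rests on just two observations, so a large spike leaks into the index for its calendar month. In this example the spike at index 18 is flagged, but so is index 6, the same month a year earlier, as a mirrored artifact. This is one concrete reason why additional history helps distinguish between competing explanations.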

Comparative analysis tasks required balanced evaluation of alternatives across multiple dimensions. Asked to compare three cloud computing platforms for a specific use case, ChatGPT provided a comprehensive comparison of pricing, features, and performance, but showed a slight bias toward one platform that the stated requirements did not fully justify. Gemini produced a well-balanced comparison with a clear discussion of tradeoffs and appropriate nuance about how different priorities would favor different options. Claude’s comparison was similarly balanced, with particularly effective framing of the decision criteria that helped Marcus understand which factors mattered most for his specific requirements.

The data analysis results demonstrated that Gemini’s integration with Google Search provides advantages for tasks requiring current information or fact verification, while Claude’s careful reasoning and acknowledgment of uncertainty reduce hallucination risks on analysis tasks. ChatGPT performs competently across most analysis tasks but requires more careful verification of factual claims and statistical methodology. For users performing serious data analysis professionally, combining AI assistance with human verification remains essential regardless of which platform is chosen, as all three occasionally make errors or inappropriate assumptions that could lead to incorrect conclusions if accepted uncritically.

Multimodal Capabilities: Vision and Beyond

Multimodal tasks involving image understanding, analysis, and reasoning tested each AI’s ability to process visual information effectively. Marcus began with straightforward image description for accessibility purposes, using a photograph of a complex street scene with multiple people, vehicles, and architectural elements. ChatGPT generated a comprehensive description that captured most of the important elements but occasionally got the spatial relationships between objects wrong. Gemini 3 Pro excelled at this task, producing highly accurate descriptions with precise spatial relationships, correctly picking out subtle details such as shop signs in the background, and distinguishing between similar objects. Claude’s descriptions were as accurate as Gemini’s and particularly well organized for someone relying on the description to understand the scene, starting with the overall context before detailing specific elements.

Chart and graph analysis tested ability to extract quantitative information from visual data representations. Given a complex bar chart showing quarterly revenue broken down by product categories and regions, ChatGPT correctly extracted the main trends and comparative relationships but made occasional small errors in reading exact values from the chart. Gemini demonstrated exceptional performance on this task, accurately reading all values and identifying patterns including subtle trends that weren’t immediately obvious from visual inspection. Claude matched Gemini’s accuracy in value extraction while providing particularly insightful commentary about what the data patterns might imply for business strategy.

Visual design comparison required subjective aesthetic judgment and ability to articulate design principles. Marcus provided two website mockups and asked for comparison and constructive feedback. ChatGPT offered thoughtful feedback on visual hierarchy, color choices, and usability considerations with specific actionable suggestions for improvement. Gemini’s feedback was similarly comprehensive with particular attention to accessibility considerations like contrast ratios and readability. Claude provided design feedback that balanced aesthetic and functional considerations particularly well, explaining the reasoning behind each suggestion rather than simply listing observations.

Document processing tasks involving complex layouts tested optical character recognition and information extraction from images rather than native PDF text. Given a scanned receipt listing multiple items, taxes, and totals in a typical receipt format, ChatGPT extracted most of the information but occasionally confused similar characters and struggled with handwritten notations. Gemini handled this task impressively, correctly parsing the receipt structure and extracting every item, quantity, price, and total without error. Claude’s extraction was as accurate as Gemini’s, with particularly good handling of the structural relationships between the receipt’s sections.
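A cheap safeguard against the character confusions described above is an arithmetic check: if the extracted line items and tax do not reproduce the printed total, something was misread. Below is a minimal sketch that uses `decimal.Decimal` to avoid floating-point rounding. The function name, the (quantity, price) tuple format, the one-cent tolerance, and the sample values are all hypothetical.

```python
from decimal import Decimal


def receipt_is_consistent(items, tax, total, tolerance=Decimal("0.01")):
    """Return True if sum(quantity * price) + tax matches total within tolerance."""
    subtotal = sum((Decimal(qty) * Decimal(price) for qty, price in items), Decimal("0"))
    return abs(subtotal + Decimal(tax) - Decimal(total)) <= tolerance


# Hypothetical extraction; values stay as strings so Decimal parses them exactly.
items = [("2", "3.49"), ("1", "12.00"), ("3", "0.99")]
print(receipt_is_consistent(items, "1.76", "23.71"))  # True

# A single misread digit ("3" read as "8") breaks the arithmetic.
misread = [("2", "8.49"), ("1", "12.00"), ("3", "0.99")]
print(receipt_is_consistent(misread, "1.76", "23.71"))  # False
```

The check cannot catch misread item names or errors that happen to cancel out, so it supplements a manual review rather than replacing one.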

Complex visual reasoning tasks required integrating visual information with general knowledge to answer questions. Shown an image of an unusual mechanical device and asked to explain its likely purpose and operation, ChatGPT provided plausible hypotheses based on visible components but was occasionally uncertain when components could serve multiple purposes. Gemini demonstrated strong visual reasoning, correctly identifying specialized components and making accurate inferences about device function based on component arrangement and characteristics. Claude’s analysis was thoughtful and acknowledged uncertainty appropriately when visual information alone couldn’t definitively determine function, suggesting what additional information would help resolve ambiguity.

Real-world practical tasks involving images revealed differences in how multimodal capabilities translate to useful assistance. When presented with a photograph of ingredients and asked to suggest recipes, ChatGPT identified all ingredients correctly and proposed several appropriate recipes matching what was available. Gemini’s recipe suggestions were similarly appropriate with slightly more creative combinations. Claude correctly identified ingredients and suggested recipes but provided particularly helpful additional information about substitutions for missing common ingredients and technique tips for successfully executing the recipes.

This competition is not limited to web browsers and desktop environments; it has moved directly into our pockets. Hardware integration is shaping the next generation of mobile technology, and comparing the built-in AI features of flagship phones such as the iPhone 16 Pro and Samsung Galaxy S25 Ultra shows how these assistants are being optimized for on-device performance and real-time interaction.

Multi-image tasks requiring comparison or synthesis across multiple visual sources tested more complex multimodal reasoning. Given before and after renovation photos of a room and asked to describe changes and assess improvement, ChatGPT accurately identified major changes but missed some subtle alterations. Gemini catalogued changes comprehensively with particular attention to details like lighting changes and decorative elements. Claude’s analysis of changes was thorough with especially thoughtful commentary about how specific changes contributed to overall aesthetic improvement.

The multimodal performance results clearly demonstrate that Gemini 3 leads in pure visual understanding tasks, accurately extracting information from images and showing sophisticated visual reasoning capabilities. Claude matches Gemini’s visual accuracy while providing superior contextual analysis and helpful commentary beyond pure description. ChatGPT performs competently on most visual tasks but trails the competition in accuracy for complex images or precise information extraction. For users with workflows involving significant image analysis or document processing, Gemini or Claude represent better choices than ChatGPT as of January 2026, though OpenAI’s rapid iteration suggests this gap may narrow in future updates.


Conversational Intelligence and Context Understanding

Conversational tasks tested each AI’s ability to maintain coherent dialogue, understand context across multiple exchanges, adapt to user needs, and provide appropriate assistance through natural interaction. Marcus began with a brainstorming session for new product development, presenting a general category – smart home devices – and asking each AI to help generate and refine ideas through conversation. ChatGPT demonstrated strong brainstorming facilitation, suggesting diverse initial ideas and asking clarifying questions that helped narrow focus to the most promising concepts. It maintained context well across ten turns of dialogue, building on previous suggestions coherently and adapting to feedback. Gemini’s brainstorming was similarly productive with particularly good questioning about user needs and market positioning. Claude facilitated thoughtful brainstorming with especially valuable critical evaluation of ideas, pointing out potential challenges or limitations that needed consideration rather than just generating unbounded possibilities.

Complex advice scenarios tested ability to provide nuanced guidance on multifaceted personal decisions. Marcus described a career dilemma involving competing job offers with different tradeoffs around compensation, location, growth opportunities, and work-life balance, asking for help thinking through the decision. ChatGPT asked relevant clarifying questions about priorities and provided structured frameworks for evaluating the tradeoffs, though it occasionally suggested considerations that weren’t particularly relevant to the specific scenario. Gemini’s advice was well-organized with good attention to both quantitative factors like compensation and qualitative considerations like company culture. Claude provided particularly thoughtful advice that helped Marcus think through not just the immediate decision but longer-term career implications, with appropriate acknowledgment that the ultimate decision depended on personal priorities that only Marcus could weigh.

Context maintenance across lengthy conversations tested each AI’s ability to remember and appropriately reference earlier parts of extended dialogues. Marcus started a conversation about planning a complex multi-week trip, supplying details about destinations, preferences, and constraints over fifteen exchanges while asking for suggestions and modifications. ChatGPT maintained context reasonably well but occasionally forgot details mentioned earlier, requiring reminders about constraints already established. Gemini showed superior context retention, referencing earlier stated preferences when making suggestions and tracking all the accumulated constraints as the plan evolved. Claude’s context maintenance matched Gemini’s, with a particularly strong ability to synthesize everything accumulated when giving final comprehensive recommendations.

Error recovery and clarification handling tested graceful handling of misunderstandings or ambiguous requests. Marcus intentionally provided vague or contradictory requests to observe how each AI responded. ChatGPT generally attempted to proceed with reasonable assumptions when facing ambiguity, sometimes guessing correctly but occasionally heading down paths that missed the intended meaning. Gemini demonstrated better disambiguation, asking clarifying questions when requests were ambiguous rather than guessing. Claude was particularly effective at error recovery, not only asking for clarification when needed but also explaining what was unclear and suggesting what information would help resolve ambiguity.

Tone adaptation throughout conversations tested ability to mirror appropriate conversational style while maintaining authenticity. Marcus varied his own tone across different conversation topics, using casual language for some exchanges and formal language for others, to observe whether AIs appropriately adapted. ChatGPT mirrored tone changes reasonably well, though it occasionally became slightly too casual even when discussing serious topics. Gemini maintained appropriate professional tone consistently, perhaps being slightly too formal at times even when casual conversation would be natural. Claude balanced tone adaptation particularly well, matching Marcus’s conversational style without feeling forced or overly accommodating.

Multi-turn technical troubleshooting conversations tested each AI’s ability to diagnose problems methodically through iterative dialogue. Marcus described a technical issue with a deliberately vague initial description, to simulate how users typically report problems, then answered diagnostic questions as the AI guided him toward a solution. ChatGPT asked relevant diagnostic questions and narrowed down probable causes effectively, reaching the correct solution within seven exchanges. Gemini’s troubleshooting was similarly effective, with a particularly logical progression through diagnostic steps. Claude’s troubleshooting felt most like working with an experienced human expert, with especially clear explanations of why each diagnostic question mattered and what different answers would indicate about the root cause.

Natural conversation flow and engagement tested whether dialogue felt natural and productive rather than mechanical or stilted. Across various casual conversations on diverse topics, ChatGPT generally maintained the most natural conversational flow with responses that felt genuinely engaged with topics rather than formulaic. Gemini’s conversation felt slightly more structured and informational, providing comprehensive responses but sometimes lacking the spontaneous quality of natural dialogue. Claude struck a good balance between being informative and conversational, though it occasionally provided longer, more structured responses than natural conversation might warrant.

The conversational intelligence results demonstrated that all three AIs handle conversation competently but with distinguishing characteristics matching their general strengths. ChatGPT excels in natural conversational flow and engagement, making it feel most like chatting with a helpful human assistant. Gemini demonstrates superior context retention and organized information delivery, valuable when conversations involve tracking multiple details or constraints. Claude provides the most thoughtful and nuanced responses, particularly valuable when discussing complex topics requiring careful consideration of tradeoffs and implications. Users should select based on whether they prioritize conversational naturalness, information organization, or depth of analysis in their typical AI interactions.

Speed, Reliability, and User Experience

Performance characteristics beyond raw capability significantly affect practical usability, with speed, reliability, and interface design shaping productivity and user satisfaction. Marcus measured response times for similar prompts on all three platforms and found notable differences. ChatGPT’s GPT-4o had the fastest average response time, approximately two to three seconds for typical text requests, while GPT-4.1 took four to six seconds on more complex tasks. Google Gemini 3 Flash responded in three to four seconds, comparable to ChatGPT, while Gemini 3 Pro took five to eight seconds on complex reasoning tasks. Claude 3.7 Sonnet in standard mode responded in four to six seconds, while extended thinking mode understandably took far longer, fifteen to forty-five seconds depending on the thinking budget allocated.
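Readers who want to reproduce timing figures like these can wrap each request in a small harness. Below is a minimal sketch that assumes a hypothetical `send_prompt` callable wrapping whichever client library is in use. A fake client stands in here so the code runs offline. The harness times the complete call and reports the median, which is less sensitive to occasional network spikes than the mean. The article does not say whether its figures measure time to the first token or to the full response, and the two can differ considerably when output is streamed.

```python
import statistics
import time
from typing import Callable


def measure_latency(send_prompt: Callable[[str], str], prompt: str, runs: int = 5) -> dict:
    """Time repeated calls and summarize wall-clock latency in seconds."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        send_prompt(prompt)  # hypothetical client call; swap in a real SDK request
        samples.append(time.perf_counter() - start)
    return {"median": statistics.median(samples), "min": min(samples),
            "max": max(samples), "runs": runs}


def fake_client(prompt: str) -> str:
    """Offline stand-in that simulates network and generation time."""
    time.sleep(0.01)
    return "ok"


stats = measure_latency(fake_client, "Summarize this paragraph.", runs=3)
print(stats)
```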

Consistency and reliability across repeated queries tested whether each AI produced stable outputs or exhibited high variance in quality and correctness. Marcus repeated identical prompts across multiple sessions, finding that ChatGPT occasionally provided noticeably different responses to the same prompt, sometimes with different approaches or varying quality levels. Gemini demonstrated more consistent outputs, typically providing similar responses when prompted identically across sessions. Claude showed similar consistency to Gemini with particularly stable outputs on analytical tasks, though creative tasks appropriately showed variation reflecting the inherently variable nature of creative generation.
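The article does not specify how consistency was judged. One crude, purely lexical way to quantify it is the mean pairwise similarity of repeated responses, sketched below with Python’s standard `difflib`. The function name and sample responses are hypothetical. Character-level similarity rates differently worded but equivalent answers as inconsistent, so this is at best a rough signal.

```python
from difflib import SequenceMatcher
from itertools import combinations
from statistics import mean


def consistency_score(responses: list[str]) -> float:
    """Mean pairwise similarity in [0, 1] across responses to one prompt."""
    if len(responses) < 2:
        raise ValueError("need at least two responses to compare")
    # ratio() is 1.0 for identical strings and 0.0 for strings with nothing in common.
    return mean(SequenceMatcher(None, a, b).ratio() for a, b in combinations(responses, 2))


# Hypothetical answers to the same prompt from three separate sessions.
runs = [
    "Use a hash map to count occurrences.",
    "Use a hash map to count occurrences.",
    "Sort the list, then count adjacent duplicates.",
]
score = consistency_score(runs)
print(round(score, 3))
```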

Error rates and hallucination frequency remained critical concerns affecting trust and requiring verification overhead. Across all fifty tasks, ChatGPT exhibited hallucinations or factual errors in approximately eight percent of responses, typically involving specific facts, statistics, or technical details rather than fundamental misunderstandings. Gemini’s hallucination rate was lower at approximately five percent, benefiting from Google Search integration for fact verification on many queries. Claude demonstrated the lowest hallucination rate at approximately three percent, with errors tending toward overcautious uncertainty rather than confident false statements. All three occasionally made reasoning errors or logical mistakes distinct from pure hallucinations, occurring at similar rates of three to five percent across platforms.
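The article reports error rates as percentages without the underlying counts, so it is worth seeing how much uncertainty a sample of this size carries. Suppose, hypothetically, that each of the fifty tasks produced one scored response. Then a single error moves a rate by two percentage points (1/50). The sketch below computes a 95% Wilson score interval for an error proportion. The counts used are illustrative assumptions, not figures from the tests.

```python
from math import sqrt


def wilson_interval(errors: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for the proportion errors / n (z=1.96 gives ~95%)."""
    if n <= 0 or not 0 <= errors <= n:
        raise ValueError("need n > 0 and 0 <= errors <= n")
    p = errors / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    # Clamp to [0, 1] to absorb floating-point noise at the extremes.
    return max(0.0, center - half), min(1.0, center + half)


# Hypothetical: 4 erroneous responses out of 50 (an 8% observed rate).
lo, hi = wilson_interval(4, 50)
print(f"{lo:.1%} to {hi:.1%}")
```

With 4 errors in 50 responses, the interval runs from roughly 3% to 19%, so rates measured on a few dozen responses are best read as indicative.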

Interface usability and design quality affect daily interaction experience beyond core AI capabilities. ChatGPT’s interface emphasizes conversational simplicity with minimal visual clutter, making it approachable for non-technical users but sometimes lacking in advanced features power users might want. The conversation history panel could be better organized for users with many chats, and the search functionality within conversations could be improved. Gemini’s interface integrates well with Google’s design language, offering familiar navigation patterns for Google users but sometimes feeling slightly cluttered when displaying search results alongside conversation. Claude’s interface strikes a balance between simplicity and functionality, with particularly good conversation organization and export options, though the extended thinking visualization could be more polished.

Mobile application quality matters increasingly as users access AI assistants from phones and tablets. ChatGPT’s mobile apps for iOS and Android offer full functionality with voice input well-integrated, though the interface sometimes feels cramped on smaller phone screens. Gemini’s mobile experience benefits from integration with Google apps and services, with voice assistant integration particularly seamless for Android users. Claude’s mobile apps provide solid functionality with good text rendering, though they lack some polish and advanced features present in competitors’ mobile experiences.

Integration capabilities with other tools and services extend utility beyond standalone chat interfaces. ChatGPT offers the most extensive integration ecosystem, with API access and a large range of third-party applications built on OpenAI’s platform, and custom GPTs let users create assistants tailored to specific workflows without coding. Gemini integrates deeply with Google Workspace, allowing seamless interaction with Gmail, Docs, Sheets, and other Google services, which is particularly valuable for users embedded in that ecosystem. Claude offers API access and integration through Amazon Bedrock and Google Vertex AI, appealing primarily to enterprise developers rather than consumer integration use cases.

Cost-effectiveness under actual usage patterns determines total cost of ownership beyond headline subscription prices. For users with moderate usage who stay within free tier limits, all three platforms provide substantial value at no cost. Users who exceed free limits face similar twenty-dollar monthly subscriptions for premium tiers, though usage limits and included features differ. ChatGPT Plus raises usage limits and adds GPT-4.1 access and DALL-E image generation. Gemini Advanced includes expanded Gemini 3 Pro usage plus Google Workspace integration and storage. Claude Pro provides higher limits and extended thinking access. API pricing favors different use cases: Gemini 3 Flash offers the best price-performance ratio for basic tasks, while Claude’s unified pricing across standard and extended thinking modes provides value for reasoning-intensive applications.

Customer support and community resources help users troubleshoot issues and learn to use each tool effectively. ChatGPT benefits from extensive community documentation, tutorials, and third-party resources created by its large user base, though OpenAI's official documentation could be more comprehensive for advanced use cases. Gemini's documentation is integrated with Google's extensive help resources, but the sheer volume of Google documentation sometimes makes specific AI-related help hard to find. Claude's documentation clearly explains its safety approach and capabilities and includes particularly good examples of effective prompting. However, its smaller user community has produced less third-party tutorial content.

Platform stability and uptime affect reliability for users depending on AI assistance for time-sensitive work. During Marcus’s testing period, ChatGPT experienced two brief outages lasting approximately thirty minutes each, while Gemini and Claude remained accessible. All three platforms occasionally showed slowdowns during peak usage periods, with ChatGPT most notably affected during evenings and weekends. Claude demonstrated the most consistent performance across different times of day, suggesting less pronounced capacity constraints during peak periods.

The Verdict: Which AI Wins?

The comprehensive testing revealed that no single AI assistant dominates across all use cases, with each platform demonstrating distinctive strengths that make it optimal for different user needs and priorities. ChatGPT maintains advantages in conversational naturalness, broad ecosystem integration, creative writing engagement, and rapid prototyping where quick iterations matter more than perfect precision. Its extensive plugin marketplace and GPT customization options provide flexibility for users wanting to extend capabilities beyond base model features. The fastest response times among flagship models make ChatGPT feel most responsive during interactive sessions. For casual users, content creators, and those prioritizing conversational ease and ecosystem breadth, ChatGPT represents an excellent choice despite occasional accuracy issues requiring verification.

Google Gemini excels in multimodal understanding, visual analysis, factual accuracy through Search integration, and tasks requiring current information beyond training data cutoffs. The deep integration with Google Workspace makes Gemini the logical choice for users already embedded in Google’s productivity ecosystem, providing seamless assistance with emails, documents, and data without context switching between applications. Gemini 3 Flash offers exceptional value through its combination of strong performance and low cost, making it ideal for high-volume applications where API pricing matters. For users working extensively with images, documents, or data analysis requiring internet access, Gemini’s multimodal capabilities and search integration provide clear advantages over competitors.

Claude AI demonstrates superior performance in three areas: coding and software development, nuanced instruction following, and complex analytical reasoning. Its extended thinking mode also suits problems that require careful step-by-step analysis. Its lower hallucination rate and more thoughtful acknowledgment of uncertainty make Claude appropriate for professional applications where accuracy matters more than speed. Extended thinking provides unique value for complex problems where seeing the reasoning steps helps users verify correctness or learn problem-solving approaches. For software developers, analysts, researchers, and professionals in fields requiring high accuracy and nuanced understanding, Claude offers compelling advantages despite having a smaller ecosystem than ChatGPT.

Marcus's testing suggests matching the AI assistant to the primary use case. For software development and coding, Claude 3.7 Sonnet provides state-of-the-art performance with valuable extended thinking for complex debugging. ChatGPT GPT-4.1 offers faster iteration for prototyping and good all-around coding capability. Gemini 3 Flash delivers solid coding performance at the lowest API cost. For creative writing and content generation, ChatGPT produces the most engaging initial drafts with a natural conversational tone. Claude excels when precision and consistency matter more than creative flair. Gemini performs competently across most creative tasks without standout strengths or weaknesses.

For data analysis and research, Gemini's Google Search integration and multimodal capabilities make it strongest for tasks requiring current information or visual data processing. Claude provides the most thoughtful analysis and appropriately acknowledges its limitations. ChatGPT offers good all-around analytical capability, though its output requires more careful fact verification. For visual tasks and document processing, Gemini leads decisively in image understanding accuracy. Claude's visual understanding is competitive, and it provides superior contextual analysis. ChatGPT trails in visual tasks but remains competent for basic image description and analysis.

For general conversation and assistance, ChatGPT feels the most natural and engaging for casual interaction. Claude provides the most thoughtful and nuanced responses for complex discussions. Gemini excels at organizing information and maintaining context across long conversations. For integration with existing workflows, ChatGPT offers the broadest ecosystem and third-party integrations. Gemini provides unmatched integration for Google Workspace users. Claude appeals primarily to enterprise developers through API access via major cloud platforms.

For many users, particularly professionals and power users, the best strategy is to keep access to several AI assistants and pick the most appropriate one for each task rather than relying on a single platform. Paying twenty dollars a month for each platform might seem prohibitive, but the free tiers on all three platforms often suffice for moderate usage. Users can keep a premium subscription to their primary platform while using the free tiers of the alternatives for the specialized tasks where those alternatives excel. For example, a user could keep ChatGPT Plus for daily conversation and content creation while using free Gemini for document analysis and free Claude for complex coding. This is a cost-effective way to leverage each platform's strengths.
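The arithmetic behind this split can be sketched in a few lines of Python. This is a minimal illustration, assuming the article's example split and a flat twenty-dollar premium price. The task categories, the `ROUTES` table, and the helpers `pick_assistant` and `monthly_cost` are hypothetical names invented for this sketch. They are not part of any assistant's product or API.

```python
# Minimal sketch of the multi-assistant strategy described above.
# ROUTES, pick_assistant, and monthly_cost are hypothetical illustrations,
# not part of any product's API.

PREMIUM_PRICE_USD = 20  # headline monthly price cited in the article (assumption)

# Task category -> (assistant, tier), following the article's example split.
ROUTES = {
    "conversation": ("ChatGPT", "premium"),
    "content_creation": ("ChatGPT", "premium"),
    "document_analysis": ("Gemini", "free"),
    "complex_coding": ("Claude", "free"),
}


def pick_assistant(task: str, default: str = "ChatGPT") -> str:
    """Return the assistant routed to a task category, or the default."""
    assistant, _tier = ROUTES.get(task, (default, "premium"))
    return assistant


def monthly_cost(routes: dict) -> int:
    """Charge one premium subscription per distinct assistant used on a paid tier."""
    paid = {assistant for assistant, tier in routes.values() if tier == "premium"}
    return len(paid) * PREMIUM_PRICE_USD


# Compare the hybrid split with paying for all three platforms.
all_premium = {task: (name, "premium") for task, (name, _) in ROUTES.items()}
print(pick_assistant("complex_coding"))  # prints Claude
print(monthly_cost(ROUTES))              # prints 20: only ChatGPT Plus is paid
print(monthly_cost(all_premium))         # prints 60: all three on paid tiers
```

The set in `monthly_cost` counts each paid assistant once, even when it covers several task categories. Sending both conversation and content creation to ChatGPT therefore still costs a single subscription.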

Looking beyond current capabilities to likely future developments, all three companies invest heavily in AI research and rapidly iterate on models and features. OpenAI's track record of aggressive capability improvements suggests ChatGPT will continue advancing quickly, particularly in areas where it currently trails, such as multimodal understanding. Google's vast resources and integration advantages position Gemini to expand its capabilities. They also let Google deepen the ecosystem integration that creates switching costs for users. Anthropic's focus on safety and reliability may result in slower capability growth, but with greater emphasis on enterprise needs and on reducing the errors that matter for professional applications.

Regulatory developments will increasingly shape these AI assistants as government agencies establish frameworks for responsible AI development and deployment. The Federal Trade Commission's AI chatbot inquiry focuses on consumer protection, particularly for children and vulnerable users. It may result in new requirements affecting features and data practices across all platforms. The National Institute of Standards and Technology (NIST) AI Risk Management Framework provides voluntary guidance that major AI companies increasingly adopt, potentially leading to greater standardization in safety features and transparency measures. Users concerned about AI ethics and safety might favor platforms that demonstrate a strong commitment to responsible development practices beyond minimum regulatory compliance.

The rapid evolution of AI technology means that capability comparisons reflect specific moments in time, with relative rankings shifting as new models are released and existing models receive updates. Marcus's January 2026 testing provides valuable insights for current decision-making but should be revisited periodically as the competitive landscape evolves. Users should stay informed about major model updates and new capability releases. They should periodically reassess whether their chosen platform still best serves their needs or whether competitors have closed the capability gaps that once justified platform loyalty.

The testing results suggest several general patterns for different types of users. Casual users without specialized needs will find ChatGPT's approachability and conversational ease most appealing, though Gemini offers similar value, particularly for Google ecosystem users. Professional users with specialized requirements should carefully evaluate which platform best addresses their specific workflows, potentially maintaining access to multiple platforms for different use cases. Software developers will generally benefit most from Claude's coding capabilities, supplemented by ChatGPT for rapid prototyping. Content creators and writers should prioritize ChatGPT while using Claude for technical content requiring precision. Researchers and analysts benefit from Gemini's multimodal capabilities and search integration, supplemented by Claude's analytical rigor. Business users embedded in Google Workspace gain substantial value from Gemini's integration, which may outweigh raw capability differences.

The testing journey Marcus undertook revealed that real-world AI performance involves far more nuance than benchmark scores or marketing claims suggest. Understanding how these tools actually perform across diverse, realistic tasks enables informed decision-making, rather than reliance on brand recognition or impressive but narrow demonstration videos. All three platforms deliver capabilities that seemed impossible just a few years ago. Their distinctive strengths and weaknesses let users optimize their AI toolkit for maximum productivity and value. The AI revolution has reached a point where choosing the "best" AI matters less than understanding which AI excels at what, so users can leverage each platform's unique advantages for their specific needs.

Frequently Asked Questions

Question 1: Which AI assistant is best for coding tasks in 2026?

Answer 1: Claude 3.7 Sonnet led the coding tasks in this hands-on testing. It scores 70.3% on SWE-bench Verified, a benchmark that evaluates the ability to solve real-world software engineering problems from open-source projects. Its extended thinking mode proves particularly valuable for complex debugging and refactoring tasks because it shows the step-by-step reasoning used to identify issues and develop solutions. ChatGPT GPT-4.1 scores 54.6% on the same benchmark but excels in rapid prototyping, where quick iteration matters more than getting everything right on the first attempt. Google Gemini 3 Flash posts a higher 78% on SWE-bench Verified and shines particularly in agentic coding workflows involving autonomous tool use and multi-step development processes. For typical coding workflows, Claude provided the most reliable results. Its thoughtful explanations help developers understand not just what code to write but why specific approaches work better than alternatives. ChatGPT offers faster iteration cycles, which are valuable during exploratory development phases. Gemini balances performance and cost effectively for high-volume API usage. All three platforms occasionally struggle with very recent programming languages or frameworks because of training data cutoffs, so developers should verify suggestions against current documentation for cutting-edge technologies. The choice ultimately depends on whether extended reasoning, rapid iteration, or cost efficiency matters most for a given development workflow.

Question 2: How does Google Gemini 3 compare to ChatGPT in real-world tasks?

Answer 2: Google Gemini 3 demonstrates clear superiority in multimodal reasoning and visual understanding tasks. It achieves 81% on the MMMU-Pro benchmark and is particularly strong at analyzing charts, graphs, documents, and complex images. Native integration with Google Search provides decisive advantages for tasks requiring current information beyond training data cutoffs, fact verification, and research that draws on authoritative web sources. Gemini excels at document processing, visual data extraction, and long-context understanding, making effective use of its 1-million-token context window to analyze extensive documents or maintain coherent reasoning across lengthy conversations. However, ChatGPT maintains advantages in creative writing engagement, conversational naturalness, and ecosystem breadth, with an extensive plugin marketplace and third-party integrations unavailable to Gemini. For content creation requiring engaging prose and a distinctive voice, ChatGPT typically produces more compelling initial drafts, though Claude often edges out both for precision-critical content. Gemini 3 Flash offers an exceptional cost-performance ratio at approximately one-quarter the API pricing of Gemini 3 Pro while remaining competitive across most benchmarks, making it ideal for high-volume applications where cost matters. In practice, Gemini suits users who prioritize visual analysis, document processing, factual accuracy, and Google Workspace integration. ChatGPT appeals to those who value conversational ease, creative writing, and broad ecosystem connectivity. Many users benefit from keeping access to both platforms and selecting the most appropriate one for each task rather than committing exclusively to a single platform.

Question 3: What are the main differences between ChatGPT and Claude AI?