BCI Devices: Neuralink vs Non-Invasive Brain-Computer Interfaces Comparison

Table of Contents

- Introduction

- Understanding Brain-Computer Interface Fundamentals

- Neuralink Technology Deep Dive

- Non-Invasive BCI Technologies and Approaches

- Performance Comparison: Signal Quality and Control Precision

- Safety Considerations and Risk Assessment

- Cost Analysis and Accessibility

- Current Applications and Use Cases

- Future Development Trajectories

- Conclusion

- Frequently Asked Questions

Introduction

The morning Sarah Rodriguez woke up unable to move her arms and legs forever changed her perspective on technology’s role in human existence. A devastating spinal cord injury from a car accident had severed the communication pathways between her brain and body, leaving her trapped in a prison of paralyzed flesh despite having a perfectly functioning mind. For months, she watched her family struggle with grief while she felt her identity slowly dissolving, reduced from an independent software engineer to someone dependent on others for every basic need. The psychological torment of being cognitively present but physically absent drove her to research every possible avenue for restoration, and that search led her to discover a rapidly evolving field that promised something once considered impossible: the ability to control computers and machines directly with thoughts alone.

Brain-computer interfaces represent one of the most profound technological breakthroughs of the twenty-first century, offering pathways to restore function for people with paralysis, enhance human cognitive capabilities, and fundamentally transform how humans interact with digital systems. The field has accelerated dramatically since 2020: companies like Neuralink have pushed invasive electrode implantation technologies forward, while researchers have refined non-invasive approaches that require no surgery whatsoever. According to the National Institutes of Health, over 450 clinical trials involving brain-computer interface technologies were registered globally by late 2025, representing a 300 percent increase from 2020 levels. The market valuation for BCI technologies reached $2.1 billion in 2025, and analysts project growth to $5.2 billion by 2030 (roughly 20 percent per year) as medical applications expand and consumer wellness devices proliferate.

The dramatic expansion of brain-computer interface research reflects substantial federal investment and growing clinical recognition of these technologies’ therapeutic potential. National Institutes of Health funding has accelerated development of innovative approaches including speech restoration systems, motor function rehabilitation protocols, and cognitive enhancement strategies. Recent NIH-supported studies have demonstrated brain-computer interfaces enabling paralyzed individuals to communicate at near-natural conversation speeds, marking substantial progress toward restoring functional independence for people with severe neurological impairments.

Understanding the fundamental differences between invasive systems like Neuralink and non-invasive alternatives requires examining not just technological capabilities but also surgical risks, long-term maintenance requirements, regulatory pathways, cost considerations, and practical accessibility for diverse populations. The invasive versus non-invasive debate extends beyond simple technical specifications into profound questions about acceptable risk tolerance, bodily autonomy, medical necessity, and whether the promise of enhanced performance justifies permanent alterations to human neural tissue. Research published in Nature Neuroscience demonstrates that invasive electrodes provide signal-to-noise ratios approximately 100 times better than scalp electrodes, yet non-invasive technologies have achieved remarkable breakthroughs in recent years through advanced machine learning algorithms that extract meaningful patterns from noisy signals.

Leading scientific journals have documented the rapid acceleration of brain-machine interface capabilities through systematic research advances and clinical validation studies. Nature Communications recently published work demonstrating non-invasive EEG-based systems achieving real-time robotic finger control at the level of individual digits, substantially narrowing the performance gap with invasive approaches for specific applications. These peer-reviewed studies provide crucial evidence guiding clinical adoption decisions and regulatory approval processes.

The human brain contains approximately 86 billion neurons, each capable of firing electrical signals up to 200 times per second, creating an incomprehensibly complex network of communication that somehow generates consciousness, memory, emotion, and voluntary movement. Brain-computer interfaces attempt to tap into this electrochemical symphony, translating neural firing patterns into digital commands that external devices can execute. Early BCI research in the 1970s demonstrated basic principles, but practical applications remained science fiction until the convergence of multiple technological advances: improved electrode materials and fabrication techniques, exponential growth in computational power enabling real-time signal processing, revolutionary machine learning algorithms capable of decoding complex neural patterns, and miniaturization of electronics allowing implantable or wearable devices. These factors have transformed BCIs from laboratory curiosities into increasingly viable medical interventions and commercial products.

The Defense Advanced Research Projects Agency has invested over $500 million in brain-computer interface research since 2010, driving innovations in both invasive and non-invasive technologies through programs like the Next-Generation Nonsurgical Neurotechnology initiative, which specifically targets development of high-performance BCIs that avoid surgical implantation. The IEEE Standards Association established working groups in 2017 to develop unified terminology, safety standards, and performance benchmarks for neurotechnologies, recognizing that standardization would prove essential for regulatory approval, clinical adoption, and consumer protection. Meanwhile, the Food and Drug Administration released comprehensive guidance documents in 2021 specifically addressing implanted brain-computer interfaces, establishing regulatory frameworks that balance innovation encouragement with patient safety protection.

International collaboration among engineers, neuroscientists, clinicians, and ethicists has proven essential for establishing brain-computer interface industry standards. The IEEE neurotechnology standardization efforts address critical gaps in terminology consistency, performance assessment protocols, data sharing formats, and safety guidelines that previously hindered comparison between different research systems and slowed clinical translation. These standards roadmaps provide frameworks enabling researchers and manufacturers to develop interoperable technologies while ensuring adequate protection for users.

Military applications of brain-computer interface technologies have driven substantial innovation in non-surgical approaches that could benefit civilian populations. The DARPA N3 program funds revolutionary research developing bidirectional brain-machine interfaces enabling service members to control unmanned systems and interact with complex digital environments through thought alone. These ambitious projects explore ultrasound, electromagnetic fields, and nanotechnology to achieve performance approaching invasive electrodes without requiring surgery, potentially transforming how millions of people with disabilities could access assistive technologies.

Healthcare providers and researchers developing brain-computer interface systems must navigate complex regulatory landscapes that significantly impact development timelines and patient access. The FDA regulatory guidance documents provide essential frameworks addressing safety considerations including biocompatibility testing, sterilization protocols, electromagnetic compatibility, software validation requirements, and clinical trial design principles specific to neural interface technologies. These comprehensive standards help ensure that innovative medical devices reach patients safely while maintaining rigorous scientific validation of therapeutic benefits.

Sarah’s journey through the BCI landscape revealed stark contrasts between available options. Neuralink promised the highest possible performance with electrode arrays featuring thousands of channels capable of recording and stimulating individual neurons with microsecond precision, potentially enabling her to control robotic limbs with thought speed and dexterity approaching natural movement. However, that promise came with requirements for multiple brain surgeries, permanent hardware implantation, ongoing maintenance procedures, unknown long-term biological responses, and costs potentially exceeding $100,000. The alternative pathway offered EEG-based headbands costing under $1,000 that required no surgery and could be removed at any time, though with significant limitations in control precision and response speed. The decision crystallized a fundamental tension in neurotechnology development: the perpetual tradeoff between maximizing performance and minimizing risk.

Understanding Brain-Computer Interface Fundamentals

Brain-computer interfaces function by establishing bidirectional communication channels between biological neural networks and external computational systems, creating what researchers describe as a “direct” connection that bypasses traditional neuromuscular pathways. When a person imagines moving their hand, specific populations of neurons in the motor cortex generate distinctive electrical activity patterns even if the actual movement never occurs due to paralysis or amputation. BCI systems detect these neural signatures through various recording methods, process the signals through sophisticated decoding algorithms, and translate the decoded patterns into control commands for external devices like computer cursors, robotic arms, wheelchairs, or communication interfaces. The reverse pathway involves stimulating specific neural populations to create artificial sensory experiences, enabling users to receive feedback from controlled devices.

The fundamental architecture of any BCI system comprises four essential components regardless of invasiveness level. The signal acquisition module captures neural activity through electrodes positioned either on the scalp surface, beneath the scalp on the brain surface, or penetrating into brain tissue itself. Signal processing modules amplify, filter, and digitize the raw electrical signals, removing artifacts from muscle movement, eye blinks, electrical interference, and other noise sources that can overwhelm the comparatively tiny voltage fluctuations generated by neurons. The decoding algorithm analyzes processed signals to identify patterns corresponding to specific intentions or mental states, employing machine learning techniques that improve accuracy through training on labeled data. Finally, the application interface translates decoded intentions into device commands and often provides sensory feedback to the user, creating a closed-loop interaction that enables skill development and improved control over time.
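The four stages described above can be sketched as a chain of small functions. This is only an illustration: every function name, the DC-offset "filter", the power threshold, and the command strings are hypothetical stand-ins, not part of any real BCI system.

```python
from statistics import mean
from typing import List


def acquire(raw_samples: List[float]) -> List[float]:
    """Signal acquisition: pass through pre-recorded samples (stand-in for electrodes)."""
    return list(raw_samples)


def preprocess(samples: List[float]) -> List[float]:
    """Signal processing: remove the DC offset (stand-in for real filtering)."""
    offset = mean(samples)
    return [s - offset for s in samples]


def decode(samples: List[float], threshold: float) -> str:
    """Decoding: toy rule mapping mean signal power to an intention label."""
    power = mean([s * s for s in samples])
    return "move" if power > threshold else "rest"


def apply_command(intention: str) -> str:
    """Application interface: translate the decoded intention into a device command."""
    commands = {"move": "CURSOR_STEP", "rest": "CURSOR_HOLD"}
    return commands[intention]


def run_pipeline(raw_samples: List[float], threshold: float = 1.0) -> str:
    """Run all four stages in order on one window of samples."""
    return apply_command(decode(preprocess(acquire(raw_samples)), threshold))


if __name__ == "__main__":
    print(run_pipeline([10.0, 12.0, 8.0, 14.0, 6.0]))  # large fluctuations
    print(run_pipeline([5.0, 5.1, 4.9, 5.0]))          # nearly flat signal
```

In a real system the decoder would be a trained model and the result of each command would be fed back to the user, closing the loop.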

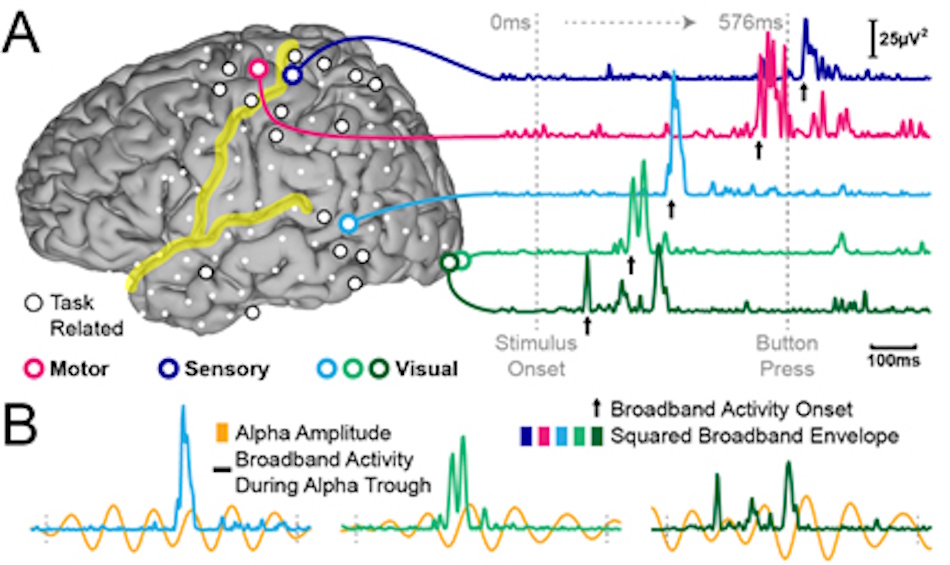

Neural electrical activity manifests across multiple frequency bands, each associated with different cognitive and motor functions. Delta waves below four hertz dominate during deep sleep stages. Theta waves between four and eight hertz appear during drowsiness and meditation states. Alpha waves from eight to thirteen hertz characterize relaxed wakefulness with eyes closed. Beta waves between thirteen and thirty hertz indicate active thinking, focus, and motor planning. Gamma waves above thirty hertz correlate with complex information processing and consciousness itself. Different BCI paradigms exploit different frequency bands and neural phenomena. Motor imagery BCIs detect changes in mu and beta rhythms when users imagine movement. P300 BCIs identify event-related potentials triggered by rare or unexpected stimuli. Steady-state visually evoked potential systems detect rhythmic brain responses to flickering visual stimuli. The choice of BCI paradigm significantly impacts achievable performance, user training requirements, and appropriate applications.
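To make the band boundaries concrete, here is a minimal sketch that maps a frequency to the bands listed above and sums signal power per band using a naive discrete Fourier transform. The half-open intervals at the band edges and the helper names are assumptions; production code would normally use an FFT library and a proper spectral estimator.

```python
import math
from typing import Dict, List

# Band edges in hertz, taken from the text; treating each as [low, high) is an assumption.
BANDS = [
    ("delta", 0.0, 4.0),
    ("theta", 4.0, 8.0),
    ("alpha", 8.0, 13.0),
    ("beta", 13.0, 30.0),
    ("gamma", 30.0, float("inf")),
]


def band_for(freq_hz: float) -> str:
    """Return the name of the band containing freq_hz."""
    if freq_hz < 0:
        raise ValueError("frequency must be non-negative")
    for name, low, high in BANDS:
        if low <= freq_hz < high:
            return name
    raise ValueError("frequency not covered by any band")


def band_powers(samples: List[float], sample_rate_hz: float) -> Dict[str, float]:
    """Sum squared DFT magnitudes per band (naive O(n^2) DFT, DC bin skipped)."""
    n = len(samples)
    powers = {name: 0.0 for name, _, _ in BANDS}
    for k in range(1, n // 2):
        freq = k * sample_rate_hz / n
        re = sum(s * math.cos(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        im = -sum(s * math.sin(2 * math.pi * k * i / n) for i, s in enumerate(samples))
        powers[band_for(freq)] += (re * re + im * im) / (n * n)
    return powers


if __name__ == "__main__":
    fs = 100.0
    # One second of a synthetic 10 Hz sine wave, which falls in the alpha band.
    wave = [math.sin(2 * math.pi * 10.0 * i / fs) for i in range(100)]
    result = band_powers(wave, fs)
    print(max(result, key=result.get))
```

A motor imagery decoder would track how power in specific bands changes over time at specific electrodes, rather than a single snapshot like this.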

Signal quality represents perhaps the most critical factor determining BCI effectiveness, fundamentally constrained by the physical distance between electrodes and target neurons. Electrical signals generated by individual neurons measure only microvolts in amplitude and attenuate rapidly as they propagate through brain tissue, cerebrospinal fluid, skull bone, and scalp tissue. Non-invasive scalp electrodes detect aggregated activity from millions of neurons simultaneously, making it impossible to distinguish individual neural firing patterns. Invasive electrodes positioned directly in neural tissue can record from small populations or even single neurons, providing vastly superior signal resolution and specificity. However, this performance advantage comes at the cost of surgical risks, immune responses, chronic inflammation, and gradual signal degradation as scar tissue encapsulates electrodes.

Research measuring BCI performance across invasive and non-invasive approaches reveals substantial differences in key metrics. Studies published in Nature Communications demonstrate that invasive intracortical BCIs achieve text entry speeds approaching 90 words per minute, compared to 10 to 20 words per minute for non-invasive EEG-based systems under optimal conditions. The information transfer rate, which measures how many bits of information per minute a BCI can reliably communicate, reaches 100 to 200 bits per minute for invasive systems, while non-invasive approaches typically achieve 20 to 40 bits per minute. Control accuracy for complex multi-dimensional tasks shows similar disparities, with invasive systems reaching 85 to 95 percent accuracy compared to 60 to 75 percent for non-invasive alternatives. These performance gaps have narrowed somewhat as machine learning advances enable more effective signal processing, but fundamental physics limits how much non-invasive approaches can improve without revolutionary new recording technologies.

The temporal dynamics of brain activity pose additional challenges for BCI development. Neural populations can reorganize their activity patterns over timescales ranging from milliseconds to months, requiring BCI decoders to adapt continuously. The phenomenon called “neural drift” causes gradual changes in recorded signals even when users attempt identical movements, degrading decoder performance unless algorithms implement adaptive recalibration strategies. Environmental factors including electrode impedance changes, temperature variations, user fatigue, and cognitive state fluctuations all introduce instabilities that complicate reliable long-term BCI operation. Invasive systems face additional challenges from immune responses gradually altering electrode-tissue interfaces, while non-invasive systems must contend with variable electrode placement, hair interference, sweat affecting electrical conduction, and user head movements disrupting signal quality.
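One simple way to picture adaptive recalibration is a slowly updated baseline that is subtracted from each incoming feature, so gradual drift is absorbed while fast changes pass through. The sketch below uses an exponential moving average; it is a generic drift-compensation technique chosen for illustration, not the method of any particular BCI, and the class name and smoothing factor are assumptions.

```python
from typing import Optional


class AdaptiveBaseline:
    """Track a slowly drifting baseline with an exponential moving average."""

    def __init__(self, alpha: float = 0.05) -> None:
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha  # larger alpha adapts faster but removes more real signal
        self.baseline: Optional[float] = None

    def update(self, value: float) -> float:
        """Fold the new value into the baseline, then return value minus baseline."""
        if self.baseline is None:
            self.baseline = value
        else:
            self.baseline += self.alpha * (value - self.baseline)
        return value - self.baseline


if __name__ == "__main__":
    tracker = AdaptiveBaseline(alpha=0.1)
    for _ in range(10):
        tracker.update(0.0)          # stable period
    first = tracker.update(5.0)      # signal level shifts, as with drift
    for _ in range(99):
        last = tracker.update(5.0)   # the baseline gradually absorbs the shift
    print(first, last)
```

The smoothing factor sets the tradeoff: too small and the decoder lags behind drift, too large and it starts cancelling the very activity it is meant to decode.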

Long-term stability challenges represent critical engineering obstacles requiring innovative solutions for brain-computer interfaces to achieve reliable daily-use performance. Comprehensive reviews published in PMC examine cutting-edge approaches including closed-loop neurostimulation, flexible bioelectronics, and artificial intelligence integration addressing the complex technical challenges facing clinical BCI translation. Understanding these stability issues helps patients and clinicians set realistic expectations about current technology capabilities and anticipated future improvements.

Neuralink Technology Deep Dive

Neuralink Corporation, founded by entrepreneur Elon Musk in 2016, has pursued an aggressive development timeline aiming to create fully implantable brain-computer interfaces with unprecedented channel counts and wireless operation. The company’s N1 implant device measures approximately 23 millimeters in diameter and contains custom-designed application-specific integrated circuits capable of recording from 1,024 electrode channels simultaneously, representing a substantial increase over earlier research systems typically featuring 100 to 200 channels. These electrodes consist of thin flexible polymer threads approximately five to ten micrometers in width, far smaller than conventional rigid electrodes, designed to minimize tissue damage during insertion and reduce chronic inflammatory responses that degrade signal quality over months and years. The entire implant resides within a titanium case hermetically sealed to protect electronics from biological fluids while remaining thin enough to sit flush with the skull surface.

The surgical implantation procedure developed by Neuralink employs a custom robotic system capable of inserting individual electrode threads into cortical tissue with sub-millimeter precision while avoiding blood vessels visible through surgical microscopy. Traditional neurosurgical techniques for implanting electrode arrays require skilled surgeons working under direct visualization, limiting the density and number of electrodes that can be safely inserted. The Neuralink surgical robot can theoretically insert threads at rates approaching six threads per minute, enabling the placement of thousands of electrodes in a single surgical session that might last several hours. The company has published videos demonstrating the robotic system inserting electrodes into phantom brain models, though as of early 2026, limited information has been publicly disclosed regarding human clinical trial results beyond initial case reports involving a small number of participants.

Neuralink’s technological approach emphasizes complete wireless operation, eliminating percutaneous connectors that pierce the skin and create infection risks in earlier generation BCIs. The N1 implant communicates with external devices via Bluetooth Low Energy protocols, streaming neural data to nearby computers or smartphones while receiving power through inductive charging systems similar to wireless phone chargers. This wireless architecture theoretically enables users to operate their BCI without visible external hardware beyond the subtle implant outline beneath scalp skin. Battery life remains a practical limitation, with the device requiring overnight charging similar to consumer electronics, though the company has suggested future iterations may harvest energy from body heat or movement to extend operational duration.

The electrode technology represents one of Neuralink’s most significant innovations compared to traditional rigid electrode arrays used in research systems like those developed through BrainGate clinical trials. Rigid electrodes made from silicon or metal create mechanical mismatches with surrounding soft brain tissue, triggering immune responses that encapsulate electrodes in scar tissue and degrade signal quality progressively. Flexible polymer threads more closely match the mechanical properties of neural tissue, potentially reducing inflammatory responses and maintaining signal stability for longer periods. Animal studies using flexible electrodes have demonstrated more stable neural recordings extending beyond one year compared to rigid electrodes showing significant degradation within months. However, flexible electrodes introduce their own challenges including insertion difficulties, structural fragility, and questions about long-term durability under the constant motion and biological activity occurring within living brain tissue.

Signal processing and decoding algorithms developed by Neuralink remain largely proprietary, though the company has indicated employing machine learning approaches including recurrent neural networks capable of learning temporal patterns in neural activity and adapting to changing signal characteristics over time. The massive channel count enables recording from large neuronal populations simultaneously, providing redundant information that improves decoder robustness when individual channels degrade or fail. Advanced algorithms can potentially extract more information from these high-density recordings compared to systems with fewer channels, compensating somewhat for the lower information content per channel compared to extremely high-quality research systems employing expensive amplification electronics and signal processing infrastructure. The wireless constraint necessitates on-board signal compression before transmission, requiring careful algorithm design to preserve essential information while meeting bandwidth and power limitations.
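A back-of-the-envelope calculation shows why on-board compression is needed. Only the 1,024-channel count comes from this article; the sampling rate, bit depth, and usable wireless throughput below are illustrative assumptions, not published device specifications.

```python
def raw_data_rate_bps(channels: int, sample_rate_hz: int, bits_per_sample: int) -> int:
    """Uncompressed data rate in bits per second."""
    return channels * sample_rate_hz * bits_per_sample


def required_compression_ratio(raw_bps: float, link_bps: float) -> float:
    """How many times the raw stream must shrink to fit the wireless link."""
    if link_bps <= 0:
        raise ValueError("link throughput must be positive")
    return raw_bps / link_bps


CHANNELS = 1024            # channel count stated in the article
SAMPLE_RATE_HZ = 20_000    # assumed per-channel sampling rate
BITS_PER_SAMPLE = 10       # assumed ADC resolution
LINK_BPS = 1_000_000       # assumed usable low-power wireless throughput

if __name__ == "__main__":
    raw = raw_data_rate_bps(CHANNELS, SAMPLE_RATE_HZ, BITS_PER_SAMPLE)
    ratio = required_compression_ratio(raw, LINK_BPS)
    print(f"raw stream: {raw / 1e6:.1f} Mbit/s, needs about {ratio:.0f}x reduction")
```

Under these assumptions the raw stream is about two hundred times larger than the link can carry, which is why implants of this kind tend to transmit detected spike events or other summary features rather than full waveforms.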

The clinical development pathway for Neuralink progressed through animal studies in pigs and non-human primates before the company received FDA approval for limited human trials under an Investigational Device Exemption. Early demonstrations included pigs with implants showing stable neural recordings during various activities, and a monkey apparently playing video games through neural control alone. The company announced the start of human clinical trials in 2024, initially targeting individuals with severe paralysis who might benefit from BCI-enabled communication and computer control. As with all invasive medical device trials, extensive safety monitoring tracks potential complications including infection, hemorrhage, seizures, tissue damage, immune responses, device malfunction, and any neurological or cognitive changes that might occur. The phased trial approach typically starts with small participant numbers to establish basic safety before expanding enrollment and testing more ambitious applications.

Non-Invasive BCI Technologies and Approaches

Electroencephalography has served as the foundation for non-invasive brain-computer interfaces since the technology’s inception, recording electrical activity through electrodes placed on the scalp surface. Modern EEG systems range from clinical-grade devices featuring 64 to 256 channels and medical certification for diagnostic applications, to consumer wellness headbands with 4 to 8 channels marketed for meditation assistance, sleep tracking, and focus training. The signal quality achievable through scalp electrodes represents a fundamental compromise, as electrical currents must traverse multiple tissue layers including brain matter, cerebrospinal fluid, skull bone, and scalp skin before reaching sensors. Each layer acts as a volume conductor that spatially blurs neural sources, while the skull’s low conductivity creates substantial signal attenuation. Despite these physical limitations, decades of research have established EEG as safe, affordable, and surprisingly capable for specific applications when combined with sophisticated signal processing.

The electrode technology employed in non-invasive systems significantly impacts signal quality, user comfort, and practical usability. Traditional wet electrodes require conductive gel application between electrode and scalp to reduce electrical impedance and improve signal transmission. These provide the highest signal quality among scalp recording methods but require tedious preparation, create messy residue, and experience gel drying over extended sessions that degrades performance. Semi-dry electrodes incorporate small amounts of conductive material or rely on specialized surface coatings to achieve acceptable impedance without liquid gel, offering compromise solutions between signal quality and convenience. Dry electrode technologies employ various approaches including metal pins that penetrate hair to contact scalp, spring-loaded contacts that maintain pressure against skin, or capacitive coupling that measures electrical fields without direct contact. Each approach presents different tradeoffs regarding signal quality, user comfort, setup time, and suitability for different applications and user populations.

Recent innovations in non-invasive sensing technologies aim to overcome EEG’s fundamental limitations through alternative recording modalities. Functional near-infrared spectroscopy measures blood oxygenation changes in cortical tissue by shining infrared light through the skull and detecting transmitted or reflected light. While fNIRS provides better spatial resolution than EEG and can penetrate several centimeters into cortical tissue, the technique suffers from slow temporal response since blood flow changes lag neural activity by several seconds, making it unsuitable for applications requiring rapid response. Magnetoencephalography detects magnetic fields generated by neural electrical activity, offering superior spatial resolution compared to EEG since magnetic fields aren’t distorted by skull and tissue. However, MEG requires elaborate superconducting sensor arrays operating in magnetically shielded rooms, limiting practical applications to research facilities. Hybrid systems combining EEG with fNIRS, accelerometers, heart rate monitors, or other physiological sensors attempt to leverage complementary information for improved decoding performance.

Machine learning advances have dramatically improved non-invasive BCI performance by enabling more effective extraction of information from noisy signals. Deep learning neural networks can automatically discover relevant features in raw EEG data without requiring manual feature engineering based on domain knowledge. Convolutional neural networks excel at identifying spatial patterns across electrode arrays and temporal patterns in signal sequences. Recurrent neural networks and transformers capture long-range temporal dependencies in brain activity that simpler approaches miss. Transfer learning techniques enable training models on large datasets from many users and then adapting them to new users with minimal calibration data, addressing the traditional problem that BCIs require extensive training periods for each individual user before achieving acceptable performance. These algorithmic improvements have enabled consumer EEG headbands to achieve functionality that required research-grade equipment just a decade earlier.

The practical applications achievable with current non-invasive BCI technology span several domains with varying performance requirements. Motor imagery paradigms detect when users imagine moving specific body parts, generating reliable control signals for selecting between two to four discrete options like directional commands for wheelchair navigation or communication interfaces. Attentional paradigms including P300 spellers present arrays of characters or symbols and detect brain responses when target items appear, enabling text entry at rates of 5 to 15 characters per minute for users with severe motor impairments. Steady-state evoked potential approaches can support more rapid selection between options through measuring responses to flickering visual or auditory stimuli, though requiring users to fixate attention on specific stimuli may prove fatiguing during extended use. Passive monitoring applications including meditation assistance, stress detection, sleep staging, and cognitive workload assessment place fewer demands on control precision and benefit from machine learning models trained on large datasets.

The user experience considerations for non-invasive BCIs differ substantially from invasive approaches. Scalp-based systems require donning hardware before each use session, with setup times ranging from under one minute for consumer headbands with minimal electrodes to 30 minutes for research-grade systems with gel application and impedance checking. Device comfort during extended wear becomes critical, as poorly designed headbands can cause pressure headaches or skin irritation. Visual aesthetics matter for consumer applications, with sleeker designs improving user acceptance compared to obviously technical-looking equipment. The ability to use BCIs in diverse environments rather than laboratory settings expands practical utility but introduces additional signal artifacts from user movement, electrical interference, changing lighting conditions, and other uncontrolled factors. Battery life, wireless connectivity reliability, software usability, and integration with existing assistive technologies all influence whether non-invasive BCIs successfully transition from promising demonstrations to everyday tools.

Performance Comparison: Signal Quality and Control Precision

Quantitative performance metrics reveal substantial differences between invasive and non-invasive BCI approaches across multiple dimensions. Signal-to-noise ratio, measuring the amplitude of desired neural signals relative to background noise and artifacts, typically ranges from 10 to 30 decibels for intracortical electrodes recording within neural tissue. Electrocorticography electrodes placed on the brain surface beneath the skull achieve intermediate SNR around 5 to 15 decibels, while scalp EEG electrodes typically measure SNR below 5 decibels and sometimes approaching zero decibels under challenging conditions. This dramatic difference in signal quality fundamentally constrains the complexity and speed of control achievable with different recording modalities. High SNR enables reliable detection of neural activity from small populations of neurons and rapid discrimination between different mental states, while low SNR requires averaging over longer time periods or larger neural populations to extract reliable signals.
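Because decibels are logarithmic, the gaps quoted above are larger than they look. The sketch below converts between decibels and power ratios and estimates the gain from averaging repeated trials. It assumes the SNR figures are power ratios and that noise is independent across trials; the function names are illustrative.

```python
import math


def power_ratio_to_db(ratio: float) -> float:
    """Convert a signal-to-noise power ratio to decibels."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return 10.0 * math.log10(ratio)


def db_to_power_ratio(db: float) -> float:
    """Convert decibels back to a power ratio."""
    return 10.0 ** (db / 10.0)


def averaged_snr_db(single_trial_db: float, n_trials: int) -> float:
    """SNR after averaging n_trials repetitions.

    Assumes the signal is identical across trials and the noise is independent,
    so noise power falls by a factor of n_trials.
    """
    if n_trials < 1:
        raise ValueError("n_trials must be at least 1")
    return single_trial_db + 10.0 * math.log10(n_trials)


if __name__ == "__main__":
    gap_db = 30.0 - 5.0  # top of the intracortical range vs. the scalp EEG ceiling
    print(f"{gap_db} dB is a power ratio of about {db_to_power_ratio(gap_db):.0f}")
    print(f"0 dB averaged over 100 trials: {averaged_snr_db(0.0, 100):.1f} dB")
```

This is the tradeoff the paragraph describes: a low-SNR system can recover reliability by averaging, but each extra trial costs time, which lowers the achievable control speed.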

Spatial resolution represents another critical performance dimension where invasive and non-invasive approaches differ dramatically. Intracortical microelectrode arrays can record from individual neurons or small clusters containing tens of neurons, enabling highly localized measurements that distinguish fine-grained patterns of population activity. Surface ECoG electrodes aggregate activity from hundreds of thousands of neurons under each contact, losing the ability to discriminate individual neural responses but still maintaining reasonable spatial specificity. Scalp EEG electrodes detect activity from millions of neurons distributed across centimeter-scale cortical regions, producing signals that represent blurred summations of activity from large brain areas. The spatial resolution achievable influences how precisely BCIs can discriminate between similar mental states or decode complex multi-dimensional intentions required for sophisticated control tasks.

Temporal resolution, measuring how rapidly BCIs can detect changes in neural activity and respond with updated control commands, varies less dramatically across recording modalities since neural electrical activity occurs on millisecond timescales for all approaches. Intracortical systems can achieve update rates exceeding 1000 hertz, enabling extremely responsive control that approaches natural movement responsiveness. EEG systems typically operate at 250 to 500 hertz sampling rates, providing adequate temporal resolution for most applications. The practical temporal response of BCIs depends more heavily on signal processing algorithms and the time required to accumulate sufficient data for reliable decoding than on raw sampling rates. Motor imagery BCIs often require one to two seconds to confidently classify user intentions, while evoked potential approaches can respond within 300 to 500 milliseconds. These response latencies significantly impact user experience, as delays create disconnect between intention and action that disrupts the intuitive control essential for skilled performance.
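The point that decision latency depends on the analysis window rather than the sampling rate can be checked with simple arithmetic. The sketch below assumes non-overlapping windows and ignores processing time; both helper names are hypothetical.

```python
def samples_per_window(sample_rate_hz: float, window_s: float) -> int:
    """Number of samples the decoder sees in one decision window."""
    return round(sample_rate_hz * window_s)


def max_decisions_per_minute(window_s: float) -> float:
    """Upper bound on decisions per minute with non-overlapping windows."""
    if window_s <= 0:
        raise ValueError("window length must be positive")
    return 60.0 / window_s


if __name__ == "__main__":
    # Doubling the sampling rate doubles the data but not the decision rate.
    for rate in (250, 500):
        print(rate, "Hz ->", samples_per_window(rate, 2.0), "samples per 2 s window")
    print("motor imagery, 2 s window:", max_decisions_per_minute(2.0), "decisions/min")
    print("evoked potential, 0.3 s window:", max_decisions_per_minute(0.3), "decisions/min")
```

Overlapping (sliding) windows can raise the update rate, but each output still reflects the full window of past data, so the underlying latency remains.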

Information transfer rate quantifies BCI communication bandwidth by measuring how many bits of information users can reliably convey per unit time. The theoretical maximum ITR depends on the number of distinguishable outputs the system can generate and the accuracy and speed with which users can select between them. Research systems using intracortical recordings have demonstrated ITRs exceeding 6 bits per second for cursor control tasks and potentially higher for direct neural control of robotic limbs when exploiting continuous high-dimensional neural activity patterns. Clinical ECoG systems achieve ITRs around 2 to 4 bits per second for communication applications. Non-invasive EEG systems typically reach 0.5 to 2 bits per second, though occasional demonstrations under highly optimized conditions have exceeded 3 bits per second. For context, typical speech communication transfers approximately 10 to 15 bits per second, illustrating that even the best current BCIs operate at speeds well below natural communication modalities.
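One widely used way to compute ITR is the formula popularized by Wolpaw and colleagues, which combines the number of selectable targets, the selection accuracy, and the time per selection. The sketch below assumes that definition (the figures quoted above may have been computed differently), and the 36-target speller settings in the usage lines are hypothetical values chosen only for illustration.

```python
import math


def wolpaw_bits_per_selection(n_targets: int, accuracy: float) -> float:
    """Bits conveyed by one selection under the Wolpaw ITR definition.

    Assumes all targets are equally likely and errors are spread evenly
    across the wrong targets.
    """
    if n_targets < 2:
        raise ValueError("need at least two targets")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    bits = math.log2(n_targets)
    if accuracy > 0.0:
        bits += accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        bits += (1.0 - accuracy) * math.log2((1.0 - accuracy) / (n_targets - 1))
    return bits


def itr_bits_per_second(n_targets: int, accuracy: float,
                        seconds_per_selection: float) -> float:
    """Information transfer rate in bits per second."""
    return wolpaw_bits_per_selection(n_targets, accuracy) / seconds_per_selection


# Hypothetical speller: 36 targets, 90 percent accuracy, 4 seconds per selection.
print(f"{itr_bits_per_second(36, 0.90, 4.0):.2f} bits per second")
```

The formula makes the tradeoff explicit: adding targets or shortening the selection time raises ITR only if accuracy holds up, because the error terms subtract from the bits gained per selection.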

Control accuracy and reliability determine whether BCIs provide useful assistance or frustrating unreliability. Invasive systems consistently achieve accuracies above 90 percent for discrete selection tasks and demonstrate smooth continuous control that enables skilled performance development with practice. Non-invasive systems show more variable performance depending on specific paradigm, individual user differences, environmental conditions, and calibration quality. Under optimal laboratory conditions with expert users and careful calibration, non-invasive BCIs can achieve 80 to 90 percent accuracy for simple binary or ternary classification tasks. However, accuracy often degrades substantially in real-world use with imperfect electrode placement, environmental interference, user fatigue, and insufficient calibration data. This reliability gap creates practical limitations where non-invasive BCIs may frustrate users attempting complex tasks that require consistent high-accuracy control.
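A rough way to see why the reliability gap matters for complex tasks is to ask how often a whole sequence of selections succeeds without a single error. The sketch below assumes each selection is independent with the same accuracy, which is a simplification, and the ten-step task length is a hypothetical value.

```python
def sequence_success_probability(per_selection_accuracy: float,
                                 n_selections: int) -> float:
    """Probability that every one of n independent selections is correct."""
    if not 0.0 <= per_selection_accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    return per_selection_accuracy ** n_selections


# Hypothetical ten-step task at the accuracy levels discussed in the text.
for accuracy in (0.80, 0.90, 0.95):
    p_all_correct = sequence_success_probability(accuracy, 10)
    print(f"accuracy {accuracy:.0%}: error-free ten-step task {p_all_correct:.0%} of the time")
```

Under these assumptions, a per-selection accuracy that sounds high still leaves most multi-step tasks containing at least one error, which is consistent with the frustration described above.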

Safety Considerations and Risk Assessment

The surgical risks associated with implanting invasive BCIs represent the most immediate and obvious safety concern distinguishing these approaches from non-invasive alternatives. Craniotomy procedures opening the skull to access brain tissue carry infection risks ranging from 1 to 5 percent in experienced neurosurgical centers, with potentially devastating consequences including meningitis, brain abscesses, or systemic sepsis requiring intensive antibiotic treatment and potentially additional surgeries. Hemorrhage during electrode placement can damage brain tissue through direct trauma or secondary effects from bleeding compressing surrounding structures, occurring in approximately 1 to 3 percent of procedures despite modern imaging guidance and surgical techniques. Postoperative complications including seizures affect 5 to 10 percent of patients undergoing intracranial procedures, potentially requiring antiepileptic medications and monitoring. These acute risks must be carefully weighed against potential benefits when considering invasive BCI implantation.

The chronic biological responses to implanted electrodes pose long-term risks that may not manifest until months or years after surgery. Foreign body reactions trigger immune responses that recruit inflammatory cells to electrode surfaces, gradually encapsulating devices in scar tissue that electrically insulates electrodes from surrounding neurons and degrades signal quality. Studies of implanted electrode arrays in research animals and human clinical trial participants document progressive signal loss over timescales ranging from months to years, though substantial individual variability exists and some electrodes maintain function for extended periods. The chronic inflammation surrounding electrodes may cause localized tissue damage through release of inflammatory mediators, potentially affecting nearby healthy neurons. Electrode materials can corrode or degrade over time within the harsh biological environment, raising concerns about toxic material release, mechanical failure, or electrical malfunctions that could cause unintended neural stimulation.

Device longevity and replacement requirements create additional risk considerations for invasive BCIs. All implanted electronics have finite operational lifespans limited by battery degradation, component failures, material fatigue, or encapsulation in scar tissue. Current invasive BCI systems have operated successfully for 5 to 10 years in some research participants, but longer-term data remain limited, and devices are likely to become technologically obsolete before they physically fail. Replacing failed or obsolete implants requires additional surgeries with their attendant risks, and explanting devices risks damaging brain tissue, because scar tissue adhering to the electrodes can tear surrounding tissue during extraction. The prospect of requiring multiple brain surgeries over a lifetime makes long-term risk assessment particularly challenging, as cumulative surgical risks compound while potential benefits must justify exposing patients to repeated procedures.
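The way surgical risk compounds across repeated procedures can be sketched with simple arithmetic. The example below assumes each surgery carries the same independent risk, which real revision surgeries may not satisfy, and the count of three lifetime surgeries is hypothetical; the 1 and 5 percent figures are the infection risk range quoted earlier in this section.

```python
def cumulative_risk(per_surgery_risk: float, n_surgeries: int) -> float:
    """Chance of at least one complication across n independent surgeries."""
    if not 0.0 <= per_surgery_risk <= 1.0:
        raise ValueError("risk must be between 0 and 1")
    return 1.0 - (1.0 - per_surgery_risk) ** n_surgeries


# Infection risk range from the text, over a hypothetical three surgeries.
for risk in (0.01, 0.05):
    total = cumulative_risk(risk, 3)
    print(f"per-surgery risk {risk:.0%}: cumulative risk over 3 surgeries {total:.1%}")
```

Because the complement probabilities multiply, the cumulative figure grows with every additional procedure, which is the compounding effect the paragraph above describes.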

The psychological and cognitive effects of invasive BCIs warrant careful consideration despite limited long-term data. Some research participants have reported changes in personality, emotional regulation, or cognitive function following electrode implantation, though establishing causation versus coincidence remains challenging with small participant numbers and multiple confounding factors. Concerns exist that chronic electrical stimulation or recording might alter neural circuit function beyond intended BCI operations, potentially affecting processes unrelated to the targeted motor or sensory systems. The long-term effects of having electronic devices chronically interfacing with neural tissue remain largely unknown, with animal studies providing imperfect models for human outcomes across decades of exposure. Ethical considerations arise regarding appropriate risk tolerance for enhancement applications versus medical necessities, and whether permanent brain modifications create obligations for long-term monitoring and support.

Non-invasive BCI safety profiles appear dramatically more favorable with risks limited primarily to minor skin irritation from electrode contact, potential for exacerbation of seizure disorders in rare cases when using visual stimulation paradigms, and psychological distress if performance expectations exceed actual capabilities. The absence of surgery eliminates infection risks, hemorrhage, and traumatic brain injury. No long-term biological responses occur since devices contact only external skin surfaces rather than neural tissue. Device failures create inconvenience rather than medical emergencies, and users can simply discontinue use without medical intervention if problems arise. However, this favorable safety profile comes with the performance tradeoffs discussed previously, creating the fundamental tension between maximizing capability and minimizing risk that defines much of BCI development.

Regulatory frameworks established by agencies including the FDA provide systematic approaches to balancing safety and innovation. Invasive BCIs classified as high-risk Class III medical devices require extensive preclinical testing followed by phased clinical trials demonstrating safety and effectiveness before receiving approval for broader use. The approval process typically requires 5 to 10 years and costs ranging from tens to hundreds of millions of dollars, creating substantial barriers to commercialization that limit innovation pace but protect patients from premature deployment of inadequately tested technologies. Non-invasive consumer devices often escape stringent medical device regulation if marketed for wellness rather than medical claims, enabling faster innovation cycles but potentially exposing consumers to devices with minimal safety testing or performance validation. The regulatory landscape continues evolving as regulators grapple with rapidly advancing technologies that blur traditional boundaries between medical devices, consumer electronics, and enhancement technologies.

Cost Analysis and Accessibility

The financial barriers to accessing invasive BCI technologies remain substantial and restrict availability primarily to research participants in clinical trials rather than general patient populations. The device hardware costs for systems comparable to Neuralink likely range from $10,000 to $30,000 for materials, electronics, and manufacturing, though exact figures remain proprietary. Neurosurgical procedures required for implantation add significant costs including operating room time, surgical team expenses, anesthesia, perioperative monitoring, and postoperative care, collectively ranging from $30,000 to $70,000 in United States healthcare systems. Additional costs arise from preoperative imaging, neurological assessments, device programming, rehabilitation training, and long-term follow-up monitoring. Conservative estimates place total costs for invasive BCI access between $50,000 and $150,000 for initial implantation, with ongoing annual costs for maintenance, calibration, and potential revision surgeries. These figures far exceed what most individuals can afford without insurance coverage, yet most insurance providers currently decline coverage for investigational technologies lacking established efficacy data.
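The cost ranges above can be combined with a few lines of arithmetic. This sketch simply adds the quoted hardware and surgery ranges and compares the result with the quoted total; the `CostRange` type and the "implied other costs" remainder are illustrative constructions, not figures from any manufacturer.

```python
from typing import NamedTuple


class CostRange(NamedTuple):
    low: int
    high: int


def add_ranges(*ranges: CostRange) -> CostRange:
    """Add low ends together and high ends together."""
    return CostRange(sum(r.low for r in ranges), sum(r.high for r in ranges))


hardware = CostRange(10_000, 30_000)       # device hardware estimate from the text
surgery = CostRange(30_000, 70_000)        # neurosurgical procedure estimate from the text
quoted_total = CostRange(50_000, 150_000)  # total initial implantation estimate from the text

subtotal = add_ranges(hardware, surgery)
# Remainder implied for imaging, assessments, programming, training, and follow-up.
implied_other = CostRange(quoted_total.low - subtotal.low,
                          quoted_total.high - subtotal.high)

print(f"hardware + surgery: ${subtotal.low:,} to ${subtotal.high:,}")
print(f"implied other costs: ${implied_other.low:,} to ${implied_other.high:,}")
```

Read this way, hardware and surgery account for most of the quoted total, with the remaining services making up the difference between the two ranges.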

Non-invasive BCI technologies span dramatic price ranges depending on intended applications and target markets. Consumer wellness headbands marketed for meditation, sleep tracking, or focus training retail between $200 and $800, placing them within reach of middle-class consumers in developed nations though still representing significant purchases. Research-grade EEG systems featuring dozens of channels, medical-grade electronics, and sophisticated software cost $10,000 to $50,000, limiting accessibility to research laboratories, clinical facilities, and well-funded rehabilitation centers. The ongoing costs for non-invasive systems remain modest, primarily involving electrode replacement, software subscriptions, and occasional hardware repairs rather than medical procedures or specialized expertise. This dramatic cost differential makes non-invasive approaches accessible to vastly larger populations, though performance limitations restrict who can benefit from currently available capabilities.

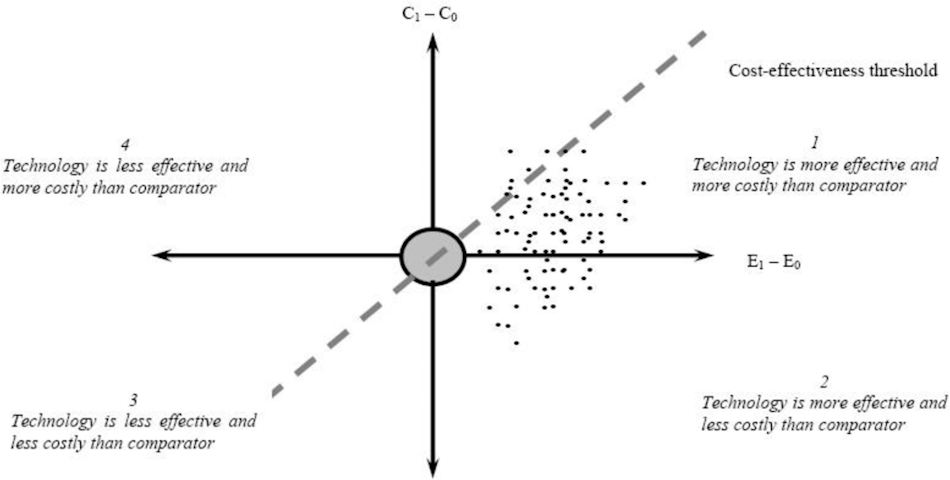

The economic considerations extend beyond direct purchase prices to encompass healthcare system costs, productivity impacts, and quality of life improvements that affect broader cost-benefit analyses. For individuals with severe paralysis unable to communicate or control their environment, successful BCI deployment could reduce caregiving requirements, enable return to employment, improve mental health outcomes, and enhance autonomy sufficient to justify substantial upfront costs. For consumer enhancement applications like improved focus or meditation assistance, the modest benefits achievable with current non-invasive technologies likely don’t justify premium pricing unless costs decline substantially. The cost-effectiveness calculations depend heavily on individual circumstances, available alternatives, and personal valuations of different outcomes.

Insurance coverage and reimbursement policies will largely determine whether invasive BCIs transition from rare research applications to mainstream medical interventions. Insurers typically require strong evidence that technologies provide clinically meaningful benefits justifying their costs compared to alternative treatments or no treatment. Establishing this evidence base requires large-scale clinical trials demonstrating that BCI users achieve functional improvements in activities of daily living, communication abilities, or other objectively measurable outcomes. The regulatory approval process validates safety but doesn’t necessarily establish cost-effectiveness, creating separate hurdles for achieving widespread reimbursement. Some healthcare systems employ health technology assessment frameworks that explicitly evaluate costs per quality-adjusted life year gained, setting thresholds that technologies must meet to warrant funding. Non-invasive consumer devices bypass these insurance considerations entirely by selling directly to consumers, though this limits their potential for transformative impact on populations with severe disabilities who most need BCI assistance but cannot afford out-of-pocket expenses.
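The cost per quality-adjusted life year calculation mentioned above can be sketched as follows. Every number here is hypothetical except the $150,000 upper cost estimate quoted earlier; the QALY gain and the funding threshold are invented for illustration, and the sketch ignores discounting and ongoing costs that a real health technology assessment would include.

```python
def cost_per_qaly(incremental_cost: float, incremental_qalys: float) -> float:
    """Incremental cost divided by incremental quality-adjusted life years."""
    if incremental_qalys <= 0:
        raise ValueError("incremental QALYs must be positive")
    return incremental_cost / incremental_qalys


incremental_cost = 150_000        # upper initial implantation estimate from the text
qalys_gained = 0.2 * 10           # hypothetical: 0.2 QALYs per year for 10 years
threshold_per_qaly = 100_000      # hypothetical funding threshold

ratio = cost_per_qaly(incremental_cost, qalys_gained)
verdict = "within" if ratio <= threshold_per_qaly else "above"
print(f"${ratio:,.0f} per QALY, {verdict} the hypothetical threshold")
```

The result is highly sensitive to the assumed quality-of-life gain and its duration, which is why the evidence from large clinical trials matters so much for reimbursement decisions.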

Federal agencies have recognized the need for specialized regulatory frameworks addressing unique challenges posed by brain-computer interface devices. The Federal Register published detailed guidance on implanted BCI devices for patients with paralysis, establishing nonclinical testing recommendations and clinical study design considerations. These official government documents provide transparency about regulatory expectations and help manufacturers plan development pathways that efficiently address safety concerns while advancing innovation.

Global accessibility considerations reveal stark disparities between regions with advanced healthcare infrastructure and developing nations where even basic medical technologies remain scarce. The specialized expertise, surgical facilities, and technological infrastructure required for invasive BCIs exist primarily in major medical centers within wealthy nations, creating geographic barriers that limit access regardless of financial resources. Non-invasive technologies with minimal setup requirements and no medical procedure dependencies could theoretically deploy more broadly, though cost barriers and limited awareness constrain actual availability. International development organizations and philanthropic funding could potentially subsidize BCI deployment in resource-limited settings if technologies prove sufficiently beneficial and costs decline substantially, but near-term reality involves profound accessibility inequalities that concentrate benefits in already advantaged populations.

Current Applications and Use Cases

Communication restoration for individuals with locked-in syndrome or severe motor impairments represents the most compelling medical application for both invasive and non-invasive BCIs. Research participants with paralysis have used invasive intracortical BCIs to type at roughly 90 characters per minute (about 18 words per minute) through imagined handwriting movements, and intracortical speech-decoding systems tested with participants who have amyotrophic lateral sclerosis have reported substantially higher word rates, enabling relatively natural communication despite severe paralysis. Non-invasive P300 spellers allow users to compose messages by attending to desired characters in visual arrays, achieving more modest speeds around 5 to 15 characters per minute but without surgical requirements. These communication BCIs profoundly impact quality of life by restoring agency, enabling expression of needs and preferences, maintaining social connections, and preserving cognitive engagement that degrades when individuals lose all communication channels. The differences in performance between invasive and non-invasive approaches become especially meaningful for users whose condition may progress rapidly, making every additional word per minute of communication speed valuable.
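Because speller speeds are usually reported in characters per minute while typing speeds are reported in words per minute, a small conversion helps when comparing them. The sketch below assumes the common typing convention of five characters per word, and the sample message is hypothetical.

```python
CHARS_PER_WORD = 5  # common typing convention, assumed here


def cpm_to_wpm(chars_per_minute: float,
               chars_per_word: int = CHARS_PER_WORD) -> float:
    """Convert characters per minute to words per minute."""
    return chars_per_minute / chars_per_word


def minutes_to_type(message: str, chars_per_minute: float) -> float:
    """Minutes needed to spell a message, counting spaces as characters."""
    return len(message) / chars_per_minute


message = "I would like a drink"  # hypothetical 20-character request
for cpm in (5, 15):               # P300 speller range quoted in the text
    print(
        f"{cpm} characters per minute is about {cpm_to_wpm(cpm):.0f} words per minute; "
        f"the sample message takes {minutes_to_type(message, cpm):.1f} minutes"
    )
```

At the lower end of the P300 range, even a short request takes several minutes to spell, which illustrates why each gain in communication speed matters to users.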

Motor function restoration through BCI-controlled neuroprosthetics has demonstrated remarkable capabilities in research settings. Intracortical BCIs enable paralyzed individuals to control robotic arms with sufficient dexterity to perform activities including feeding themselves, manipulating objects, and even playing video games through decoded movement intentions. More ambitiously, systems combining BCIs with functional electrical stimulation of paralyzed muscles have enabled tetraplegic individuals to regain limited use of their own hands and arms by routing decoded motor commands from the brain directly to muscles bypassing injured spinal cord pathways. Non-invasive BCIs have enabled wheelchair control, computer cursor manipulation, and basic robotic control, though typically limited to selecting between discrete options rather than smooth continuous movements. The gap between invasive and non-invasive performance becomes particularly evident in motor applications where timing precision and multi-dimensional control critically determine functional utility.

Sensory restoration applications attempt to create bidirectional BCIs that not only decode motor intentions but also deliver sensory feedback by stimulating appropriate neural populations. Invasive systems have demonstrated restoration of touch sensations from prosthetic limbs through intracortical microstimulation that creates perception of contact, pressure, and texture. Visual prosthetics for blindness attempt to create rudimentary artificial vision by stimulating visual cortex with patterns corresponding to camera images, though current capabilities remain far from natural vision and create only simplified perception of edges and shapes. Auditory BCIs bypass damaged ear structures to restore hearing through direct stimulation of auditory pathways, with cochlear implants representing the most successful neural prosthetic technology deployed at scale. Non-invasive approaches to sensory feedback have explored transcutaneous electrical stimulation, visual displays, and auditory cues as alternatives to direct neural stimulation, providing less naturalistic sensations but avoiding implantation requirements.

Mental health applications represent emerging domains where both invasive and non-invasive BCIs show preliminary promise. Deep brain stimulation, while not strictly a BCI since it provides continuous stimulation rather than closed-loop control, has demonstrated efficacy for treatment-resistant depression and obsessive-compulsive disorder through modulation of limbic circuit activity. Closed-loop BCIs detecting neural signatures of seizures could enable responsive stimulation that prevents epileptic episodes before they fully develop. Non-invasive neurofeedback training using EEG enables individuals to learn voluntary control over specific brain rhythms, with applications proposed for anxiety reduction, attention enhancement, and emotional regulation. The evidence base for these mental health applications remains weaker than motor and communication uses, with many studies showing modest effects and concerns about placebo responses complicating interpretation. However, the potential to address conditions affecting hundreds of millions globally creates strong motivation for continued development.

Consumer wellness applications have proliferated as non-invasive EEG headbands have become affordable and marketed for general audiences. Meditation assistance apps use real-time brainwave monitoring to provide feedback on relaxation states, supposedly helping users develop better meditation skills through quantified tracking. Focus training applications claim to enhance concentration abilities through neurofeedback sessions that reward sustained attention states. Sleep tracking headbands monitor sleep stages throughout the night, providing detailed reports on sleep architecture that users can employ to optimize sleep hygiene behaviors. The scientific evidence supporting these consumer applications varies substantially, with some grounded in legitimate neuroscience research while others make exaggerated claims unsupported by rigorous validation studies. The accessibility and low risk of these applications create value even if effects prove modest, though regulatory gaps raise concerns about predatory marketing and unrealistic consumer expectations.

Future Development Trajectories

The technological roadmap for invasive BCIs focuses on addressing current limitations including electrode longevity, device miniaturization, wireless power transfer, and biosafety improvements. Flexible polymer-based electrodes continue advancing with designs that better match brain tissue mechanical properties, potentially reducing chronic inflammation and extending functional lifespans to decades rather than years. Novel materials including graphene, conducting polymers, and nanostructured surfaces promise improved biocompatibility and electrochemical properties that maintain signal quality despite biological encapsulation responses. Wireless power transfer technologies borrowing from consumer electronics could eliminate battery replacement requirements, enabling truly permanent implants that never require removal for charging. Integration of closed-loop recording and stimulation capabilities within single devices could enable more sophisticated control schemes including sensory feedback, error correction, and adaptive algorithms that continuously optimize performance.

Machine learning advances will likely drive substantial performance improvements for both invasive and non-invasive BCIs through more effective decoding algorithms and reduced calibration requirements. Current deep learning approaches require extensive training data from individual users before achieving optimal performance, creating barriers to practical deployment and limiting functionality during initial use periods. Transfer learning and few-shot learning techniques could enable BCIs to achieve good performance with minimal calibration by leveraging models pretrained on large multi-user datasets. Unsupervised learning approaches that discover latent structure in neural data without requiring labeled training examples could enable BCIs to adapt automatically to changing signal characteristics without explicit recalibration sessions. Reinforcement learning paradigms might allow BCIs and users to co-adapt through interaction, jointly optimizing decoding strategies and user control strategies to maximize overall performance.

Non-invasive sensing technologies may see revolutionary improvements that narrow performance gaps with invasive approaches. Focused ultrasound techniques show promise for modulating deep brain activity non-invasively, potentially enabling both recording and stimulation through acoustic interactions with neural tissue. Optogenetic approaches combining genetic modification with optical stimulation are not strictly non-invasive, since they require viral vector delivery and a means of delivering light to the targeted cells, but they could reduce reliance on penetrating electrode arrays and might eventually offer performance approaching invasive electrodes with reduced surgical requirements. High-density electrode arrays featuring hundreds or thousands of scalp sensors could extract more information from EEG signals through improved spatial resolution, though practical considerations around setup time and user comfort present challenges. Hybrid systems combining complementary recording modalities like EEG and fNIRS could leverage strengths of each to achieve better overall performance than either alone.

The convergence of BCIs with artificial intelligence creates intriguing possibilities for augmented intelligence applications beyond medical restoration. Brain-computer interfaces could enable direct information transfer from digital systems into human memory, potentially allowing rapid learning of languages, skills, or factual knowledge through neural pattern induction. Bidirectional communication between multiple users’ brains could create novel forms of collaborative cognition or shared experiences impossible through conventional communication channels. Integration with artificial intelligence systems could create cognitive prosthetics that enhance reasoning, creativity, or decision-making beyond normal human capabilities. These speculative enhancement applications raise profound ethical questions about identity, fairness, human nature, and whether society should embrace technologies that alter fundamental aspects of human cognition. The boundary between therapy and enhancement remains fuzzy, with technologies developed for medical needs potentially creating pressure for adoption by healthy individuals seeking competitive advantages.

Regulatory frameworks will need substantial evolution to address emerging BCI capabilities and applications. Current medical device regulations focus primarily on safety and efficacy for specific indicated uses, providing limited guidance for enhancement applications that blur medical and consumer boundaries. International standards for BCI performance assessment, reporting protocols, and safety benchmarks remain under development despite growing commercial activity and clinical deployments. Privacy protections for neural data warrant attention given the sensitivity of information about cognitive states, intentions, and potentially even thoughts that BCIs might eventually decode. Liability frameworks must address who bears responsibility when BCIs malfunction or cause harm, particularly as algorithms become more complex and autonomous. The interplay between innovation incentives, patient protection, equity concerns, and ethical considerations will shape how aggressively societies embrace BCI technologies versus maintaining cautious restraint.

Conclusion

The comparison between Neuralink-style invasive brain-computer interfaces and non-invasive alternatives reveals fundamental tradeoffs rather than clear superiority of either approach. Invasive systems provide unmatched signal quality, control precision, and functional capabilities that enable sophisticated applications including high-speed communication and dexterous robotic control, but at substantial cost, including surgical risks, unknown long-term effects, maintenance requirements, and financial barriers that restrict accessibility. Non-invasive approaches offer safety, affordability, and accessibility while accepting significant performance limitations that constrain applications primarily to discrete selection tasks and wellness monitoring. The appropriate choice depends entirely on individual circumstances including medical needs, risk tolerance, financial resources, and available alternatives.

For individuals with severe paralysis or communication impairments facing dramatically reduced quality of life, the risks and costs of invasive BCIs may prove justified if technologies deliver transformative restoration of lost capabilities. The performance advantages of invasive recording could mean the difference between achieving functional independence versus remaining dependent on caregivers for all basic needs. However, the still-experimental status of invasive systems, limited long-term safety data, and uncertain insurance coverage create substantial uncertainties that individuals must weigh carefully with medical guidance. Non-invasive alternatives may provide sufficient functionality for some applications while avoiding surgical risks, though their performance limitations may prove frustratingly inadequate for users accustomed to natural communication and movement speeds.

The future landscape of brain-computer interfaces will likely include diverse approaches optimized for different use cases rather than singular solutions dominating all applications. Invasive systems may become standard interventions for severe paralysis while regulatory frameworks establish safety guidelines and insurance coverage develops. Non-invasive consumer devices could proliferate for wellness applications if manufacturers avoid predatory marketing and develop genuine value propositions beyond placebo effects. Intermediate approaches including minimally invasive techniques that improve on scalp EEG performance without requiring craniotomy may fill niches balancing safety and capability. The accelerating pace of technological development, driven by substantial commercial investment and intense research activity, suggests that capabilities will expand substantially over the coming decade, potentially rendering current comparisons obsolete as both invasive and non-invasive approaches achieve breakthroughs that overcome current limitations.

Sarah’s journey through this landscape ultimately led her to enroll in a clinical trial testing an electrocorticography system that places electrodes beneath the skull but on the brain surface rather than penetrating tissue, representing a middle path attempting to balance signal quality and surgical risk. Her decision reflected personal circumstances including the severity of her paralysis, exhaustion of alternative treatments, financial support from disability insurance covering experimental procedures, and psychological need for agency restoration outweighing concerns about uncertain outcomes. Three years after implantation, she reports achieving functional computer control enabling email communication and environmental control sufficient to regulate her home temperature, lighting, and entertainment systems independently. The technology has not restored the life she had before injury, but has returned enough autonomy to make existence feel worthwhile again. Her experience illustrates both the profound promise of brain-computer interfaces and the sobering reality that current systems remain far from the seamless brain-machine symbiosis depicted in science fiction, with substantial development still required before BCIs achieve their full transformative potential.

Frequently Asked Questions

Question 1: What is the main difference between Neuralink and non-invasive brain-computer interfaces?

Answer 1: Neuralink employs surgical implantation of flexible electrode threads directly into cortical tissue, positioning sensors within millimeters of target neurons to record electrical activity with exceptionally high signal quality and temporal precision. This invasive approach requires craniotomy procedures performed by neurosurgeons, creates permanent alterations to brain tissue through electrode insertion, and necessitates ongoing medical monitoring for potential complications. The system resides entirely beneath the scalp after implantation and communicates wirelessly with external devices. In contrast, non-invasive brain-computer interfaces use external sensors placed on the scalp surface, most commonly electroencephalography electrodes that detect electrical signals after they propagate through brain tissue, cerebrospinal fluid, skull bone, and scalp skin. These non-invasive systems avoid surgical procedures entirely, allow users to don or remove devices at will, and eliminate risks associated with implanted foreign materials. The fundamental tradeoff involves accepting substantially lower signal quality and reduced control precision in exchange for eliminating surgical risks and making the technology accessible to far wider populations.

Question 2: Are non-invasive brain-computer interfaces safe for everyday use?

Answer 2: Non-invasive brain-computer interfaces utilizing electroencephalography technology demonstrate excellent safety profiles for regular use based on decades of clinical and research experience. These devices merely record existing electrical activity generated by neural processes rather than introducing energy or stimulation into the body, creating minimal biological risk. The primary safety concerns involve minor skin irritation from electrode contact, particularly with metal electrodes pressed firmly against scalp tissue during extended wear sessions. Users with sensitive skin may experience redness or discomfort that typically resolves quickly after device removal. Extremely rare cases of visual stimulation paradigms potentially triggering seizures in photosensitive individuals warrant caution, though modern BCI systems implement safety protocols minimizing these already minimal risks. Psychological concerns arise primarily from unrealistic performance expectations leading to frustration rather than from direct device effects. Unlike implanted devices carrying infection risks and requiring surgical removal if complications develop, non-invasive systems create no permanent alterations and users can simply discontinue use without medical intervention if any problems occur. Regulatory oversight for consumer neurotechnology products remains somewhat limited compared to medical devices, placing responsibility on users to select reputable manufacturers implementing appropriate safety testing and quality controls.

Question 3: How much does Neuralink surgery cost compared to non-invasive alternatives?

Answer 3: Neuralink has not publicly disclosed exact pricing for its brain-computer interface system, but estimates based on comparable invasive neural device procedures suggest total costs ranging from $40,000 to $100,000 for initial implantation including device hardware, neurosurgical procedure, perioperative care, and initial programming. This substantial investment encompasses specialized manufacturing of custom electrode arrays, operating room expenses for several-hour procedures requiring neurosurgical expertise, anesthesia costs, postoperative monitoring, neuroimaging studies, rehabilitation training, and ongoing calibration sessions. Additional annual costs arise from device maintenance, software updates, clinical monitoring for potential complications, and eventual replacement surgeries when devices reach end-of-life after perhaps five to ten years. Insurance coverage remains uncertain for investigational technologies lacking established medical necessity, potentially requiring patients to absorb costs entirely out-of-pocket. Conversely, non-invasive EEG headbands range from approximately $200 for basic consumer meditation devices to $3,000 for advanced multi-channel systems marketed for serious neurofeedback applications. Research-grade equipment with medical certification costs $10,000 to $50,000 but primarily serves laboratory and clinical settings. The ongoing expenses for non-invasive systems remain minimal, primarily involving occasional electrode replacement and software subscription fees. This dramatic cost differential makes non-invasive approaches accessible to middle-class consumers in developed nations, while invasive systems remain restricted primarily to research participants in clinical trials receiving subsidized or free access.

Question 4: What applications can current non-invasive BCIs handle effectively?

Answer 4: Non-invasive brain-computer interfaces demonstrate reliable functionality for applications that tolerate their inherent limitations in signal quality, control precision, and response speed. Neurofeedback training applications, which teach users voluntary control over specific brainwave patterns, show consistent evidence for stress reduction, attention enhancement, and relaxation promotion when delivered through proper training protocols. Sleep monitoring systems classify sleep stages throughout the night by detecting characteristic EEG patterns associated with rapid eye movement, light sleep, and deep sleep phases, providing actionable insights for optimizing sleep hygiene behaviors. Meditation assistance applications offer real-time feedback on mental states during meditation practice, helping users recognize when attention wanders and reinforcing sustained focus states. Basic wheelchair navigation becomes possible through motor imagery paradigms that detect intended movement directions from brain patterns, though control is typically limited to selecting among four to eight discrete commands. Communication systems for individuals with severe motor impairments use attention-based selection paradigms that enable text entry at modest speeds sufficient for expressing basic needs and maintaining social connections. Gaming applications provide entertainment while requiring only low-bandwidth control signals that non-invasive BCIs can reliably generate. Research applications benefit from non-invasive recordings for studying cognitive processes, attention mechanisms, and neural correlates of behavior without subjecting participants to surgical risks. These applications collectively demonstrate that non-invasive BCIs provide genuine utility despite their performance limitations compared to invasive alternatives.
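
To put the four to eight discrete commands mentioned above in perspective, selection-based BCI control is often summarized with the Wolpaw information transfer rate, which converts the number of commands and the selection accuracy into bits per selection. The following is a minimal Python sketch of that formula. The 80 percent accuracy and 4 seconds per selection used in the example are illustrative assumptions, not figures from this article or from any specific device.

```python
import math


def wolpaw_bits_per_selection(n_commands: int, accuracy: float) -> float:
    """Bits conveyed by one selection under the Wolpaw ITR model.

    Assumes every command is equally likely and that errors are spread
    evenly across the wrong commands. Accuracy at or below chance level
    (1 / n_commands) is reported as 0 bits.
    """
    if n_commands < 2:
        raise ValueError("need at least two commands")
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must be between 0 and 1")
    if accuracy <= 1.0 / n_commands:
        return 0.0
    bits = math.log2(n_commands) + accuracy * math.log2(accuracy)
    if accuracy < 1.0:
        error = 1.0 - accuracy
        bits += error * math.log2(error / (n_commands - 1))
    return bits


def bits_per_minute(n_commands: int, accuracy: float,
                    seconds_per_selection: float) -> float:
    """Scale bits per selection by how many selections fit in a minute."""
    per_selection = wolpaw_bits_per_selection(n_commands, accuracy)
    return per_selection * 60.0 / seconds_per_selection


if __name__ == "__main__":
    # Hypothetical operating point: 80% accuracy, one selection every 4 s.
    for n in (4, 8):
        rate = bits_per_minute(n, accuracy=0.80, seconds_per_selection=4.0)
        print(f"{n} commands: {rate:.1f} bits/min")
```

Under this model, adding commands or shortening the selection time only raises the rate if accuracy holds up, which is one reason discrete-command systems tend to stay at a small number of options.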

Question 5: Does Neuralink offer better performance than all non-invasive options?

Answer 5: Neuralink’s invasive electrode approach provides substantially superior signal quality, temporal precision, spatial resolution, and control capabilities compared to current non-invasive brain-computer interfaces across virtually all quantifiable performance metrics. The proximity of Neuralink electrodes to target neurons enables recording of action potentials from small neural populations, detecting subtle variations in firing patterns that scalp electrodes cannot distinguish through layers of intervening tissue. This signal quality advantage translates into faster response times, higher information transfer rates, more accurate decoding of user intentions, and the capability for smooth continuous control of multi-dimensional devices rather than discrete option selection. Published research demonstrates invasive BCIs achieving typing speeds approaching natural handwriting rates, robotic arm control with dexterous manipulation approaching biological movement, and communication bandwidths substantially exceeding non-invasive alternatives. However, this performance advantage comes with important caveats. Recent algorithmic advances have enabled non-invasive systems to achieve functionality that once required invasive recording, using machine learning techniques to extract information more effectively from noisy signals. For applications that do not require extreme precision or rapid response, including meditation assistance, sleep tracking, and basic communication, non-invasive approaches may provide sufficient performance, making an invasive procedure hard to justify. The performance gap continues to narrow as non-invasive technologies improve, though fundamental physical limits ultimately constrain how closely scalp recordings can approach intracortical signal quality.

Question 6: What are the major risks associated with invasive BCIs like Neuralink?

Answer 6: Invasive brain-computer interfaces carry substantial medical risks inherent to neurosurgical procedures and long-term implantation of foreign materials within neural tissue. Acute surgical complications include infection, with rates ranging from 1 to 5 percent depending on facility expertise and patient factors, potentially requiring intensive antibiotic treatment or additional surgeries to remove infected hardware. Hemorrhage during electrode insertion occurs in approximately 1 to 3 percent of procedures despite modern imaging guidance, potentially causing neurological damage through direct trauma or compression from blood accumulation. Postoperative seizures affect 5 to 10 percent of patients following intracranial procedures, sometimes requiring long-term antiepileptic medications. Chronic risks emerge from biological responses to implanted materials, including foreign body reactions that progressively encapsulate electrodes in scar tissue and degrade signal quality over months to years. Unknown long-term consequences of having electronic devices permanently interfacing with neural tissue raise concerns about potential alterations to circuit function, changes in cognitive processes, or personality modifications, though limited data exist regarding decades-long exposure. Device longevity limitations necessitate eventual replacement surgeries with their attendant risks, creating cumulative exposure to procedural complications over a lifetime. Psychological impacts, including anxiety about device malfunction, a sense of lost bodily integrity, and dependence on medical technology, create additional burdens beyond physical safety concerns. The investigational status of systems like Neuralink means long-term risk profiles remain incompletely characterized, requiring participants to accept uncertain outcomes despite rigorous safety monitoring during clinical trials.
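
The idea of cumulative exposure across repeated surgeries can be made concrete with a small calculation. The sketch below assumes, purely for illustration, that each procedure carries the same complication probability and that procedures are statistically independent. Neither assumption comes from clinical data, and the count of four lifetime procedures is hypothetical. The per-procedure rates reuse the 1 to 5 percent infection range quoted above.

```python
def cumulative_risk(per_procedure_risk: float, procedures: int) -> float:
    """Probability of at least one complication across several procedures.

    Simplifying assumptions (not clinical facts): the risk is identical
    for every procedure and the procedures are independent of each other.
    """
    if not 0.0 <= per_procedure_risk <= 1.0:
        raise ValueError("per_procedure_risk must be between 0 and 1")
    if procedures < 0:
        raise ValueError("procedures must be non-negative")
    # Chance of avoiding the complication every time, then take the complement.
    return 1.0 - (1.0 - per_procedure_risk) ** procedures


if __name__ == "__main__":
    # Hypothetical lifetime: one initial implantation plus three replacements.
    for risk in (0.01, 0.05):
        total = cumulative_risk(risk, procedures=4)
        print(f"per-procedure {risk:.0%} -> lifetime {total:.1%}")
```

In practice, replacement surgeries may carry different risks than the initial implantation, so this should be read as a rough lower-effort estimate rather than a prediction.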

Question 7: Can non-invasive BCIs decode complex thoughts or intentions?

Answer 7: Current non-invasive brain-computer interfaces cannot decode specific thought content, complex intentions, or detailed cognitive processes with meaningful accuracy due to fundamental limitations of scalp EEG recording. The substantial signal degradation that occurs as electrical activity propagates from neural sources through brain tissue, cerebrospinal fluid, skull, and scalp creates spatial blurring that prevents discrimination of the localized neural firing patterns that encode detailed information. Non-invasive BCIs reliably detect broad categories of brain states, including motor imagery of different body parts, attention focused on specific stimuli, levels of mental workload, emotional arousal states, and transitions between sleep stages. These capabilities enable discrete selection among limited options and passive monitoring of general cognitive states but fall far short of reading minds or decoding natural thought processes. Recent advances in machine learning have somewhat improved decoding of motor intentions and attention states by identifying subtle patterns in noisy signals, yet fundamental physics constrains how much information can pass through skull and tissue regardless of algorithmic sophistication. Claims by some consumer neurotechnology companies that their devices read thoughts or detect lies substantially overstate current capabilities and lack scientific support. The gap between detecting general brain states and decoding specific thought content represents orders of magnitude in information complexity, requiring neural recording technologies far beyond current non-invasive approaches. Invasive intracortical recordings enable somewhat more detailed decoding of movement intentions and potentially coarse semantic categories, but even the highest-resolution current systems cannot access the detailed neural codes representing complex thoughts, let alone read minds in the sense depicted by science fiction.

Question 8: How long do Neuralink implants last before replacement?

Answer 8: The expected operational lifespan for Neuralink-style brain-computer interface implants remains uncertain due to limited long-term human data, though comparable invasive neural devices suggest functional periods ranging from five to fifteen years before replacement becomes necessary. Multiple factors influence implant longevity: progressive degradation of the electrode-tissue interface as immune responses encapsulate electrodes in scar tissue and reduce signal quality, corrosion or mechanical failure of electrode materials within the harsh biological environment, electronic component failures from continuous operation under temperature fluctuations and mechanical stress, and battery degradation that shortens operating time between charges. Research participants with earlier-generation intracortical microelectrode arrays have maintained partial functionality for over ten years in exceptional cases, though typically with substantial performance degradation from initial capabilities as increasing numbers of electrodes fail or become encased in poorly conducting scar tissue. Technological obsolescence may prove more limiting than physical device longevity, as rapid advances in electrode design, signal processing algorithms, and wireless communication protocols could make implanted systems obsolete before they reach physical end-of-life, creating pressure for replacement to access improved capabilities. The hermetic sealing required to protect electronics from biological fluids represents another critical failure point, as even microscopic breaches enable fluid ingress that rapidly destroys sensitive components and potentially exposes tissue to toxic materials or corrosion products. Replacement procedures carry surgical risks similar to those of initial implantation and may face additional complications from scar tissue surrounding existing implants and from tissue damage during explantation. These considerations make device longevity assessment complex, balancing physical durability against performance degradation and the pace of technological advancement.

Question 9: Are there hybrid approaches combining invasive and non-invasive BCI technologies?

Answer 9: Researchers have developed several hybrid and minimally invasive approaches that attempt to optimize the tradeoff between signal quality and procedural risk that defines the invasive versus non-invasive spectrum. Electrocorticography systems place electrode grids beneath the skull on or near the brain surface without penetrating neural tissue, achieving intermediate signal quality superior to scalp EEG while reducing tissue trauma compared to intracortical penetration. This approach requires a craniotomy for implantation but creates less chronic inflammation because the electrodes rest on the cortical surface or its protective coverings rather than being inserted among neurons. Depending on placement, these grids are described as epidural (between skull and dura) or subdural (between dura and brain), with the two positions offering different invasiveness-performance tradeoffs. Endovascular electrodes represent particularly promising minimally invasive alternatives, in which flexible electrode arrays are threaded through blood vessels to reach brain regions without opening the skull. These stent-mounted devices, often called stentrodes, are delivered by catheter through femoral or jugular vessels and expand within cortical blood vessels adjacent to motor areas, recording neural activity through vessel walls with quality approaching that of surface electrodes. The endovascular procedure avoids open brain surgery while maintaining some performance advantages over scalp recording. Hybrid systems combining non-invasive EEG with intracortical electrodes enable comparison of recording modalities and can potentially leverage complementary information from different spatial scales. Some approaches attempt “micro-invasive” techniques involving tiny injections of neural dust particles or injectable electrode materials that could theoretically deploy without major surgery, though these remain largely experimental with uncertain long-term biocompatibility and functionality.

Question 10: What regulatory approvals do BCI devices need before public use?